On August 2, 2026, the majority of obligations under the European AI Act will apply in full to every business using high-risk artificial intelligence systems within the European Union.

Fines can reach up to €35 million or 7% of annual global turnover, whichever is higher.

With five months to go before the deadline, most French SMEs and mid-sized companies have yet to conduct a single audit of their AI systems, even though several obligations have already been in effect since February 2025.

Key takeaways:

- August 2, 2026 is the critical deadline: high-risk AI systems must be compliant, or face fines of up to €35M or 7% of global revenue

- AI-powered recruitment tools, credit scoring systems, and facial recognition are classified as high-risk and become subject to strict obligations from August 2, 2026

- The CNIL is France’s designated authority for monitoring and enforcing AI Act compliance

- AI systems placed on the market before August 2, 2026 benefit from a grandfathering clause until August 2, 2027, but only if they are not substantially modified

- Six concrete steps to start now: AI inventory, risk classification, risk management and human oversight, technical documentation, conformity assessment, CE marking and EU registration

The AI Act’s phased timeline: what is already in force

The European AI Act (Regulation (EU) 2024/1689) entered into force on August 1, 2024, but its application is phased in over three years according to the risk level of each use case.

Since February 2, 2025, two categories of obligations already apply to all businesses without exception: the prohibition of explicitly banned AI practices and AI literacy obligations.

Prohibited practices include subliminal behavioral manipulation, generalized social scoring of citizens, and real-time facial recognition in public spaces for law enforcement purposes.

AI literacy obligations require every organization to ensure that employees who use or oversee AI systems have a sufficient level of competence to understand what they are working with.

Since August 2, 2025, the penalties framework has been operational and obligations relating to general-purpose AI models (GPAI, such as GPT-4o, Claude, Gemini) apply to businesses that integrate them into their products or services.

In February 2026, the European Commission published its official guidelines on high-risk system classification, giving businesses a concrete framework to self-assess their tools.

The August 2, 2026 deadline is not limited to large tech companies: any French SME using an AI recruitment, scoring, or biometric analysis tool must be compliant by that date.

A grandfathering clause protects AI systems already on the market before August 2, 2026: these tools benefit from a transition period until August 2, 2027, provided they do not undergo substantial modifications.
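The phased timeline described above can be kept as a small lookup table. The following is a minimal sketch that uses only the dates given in this article; the names `MILESTONES`, `obligations_in_force` and `days_until_high_risk_deadline` are illustrative assumptions, not part of any official tool.

```python
from datetime import date

# Application dates as stated in this article (labels are shorthand, not legal text).
MILESTONES = [
    (date(2025, 2, 2), "ban on prohibited AI practices"),
    (date(2025, 2, 2), "AI literacy obligations"),
    (date(2025, 8, 2), "penalties framework"),
    (date(2025, 8, 2), "general-purpose AI model obligations"),
    (date(2026, 8, 2), "high-risk system obligations"),
]

HIGH_RISK_DEADLINE = date(2026, 8, 2)


def obligations_in_force(on: date) -> list[str]:
    """Return the obligations whose application date has been reached."""
    return [label for start, label in MILESTONES if start <= on]


def days_until_high_risk_deadline(today: date) -> int:
    """Days left before the high-risk deadline (0 once it has passed)."""
    return max((HIGH_RISK_DEADLINE - today).days, 0)


if __name__ == "__main__":
    print(obligations_in_force(date(2025, 3, 1)))
    print(days_until_high_risk_deadline(date(2026, 3, 2)))
```

A table like this is only a planning aid: it tells a compliance lead which blocks of obligations to check on a given date, not whether a specific system meets them.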

Which AI systems in your business are classified as high-risk

Annex III of the AI Act defines the categories of high-risk AI systems that directly concern French businesses.

The classification rests on a single central criterion: does the AI system make or influence decisions that affect people’s fundamental rights or physical safety?

Use cases directly relevant to your organization

In human resources: any AI software used for automatic CV screening, candidate evaluation, performance scoring, promotion or dismissal decisions is classified as high-risk.

In finance and credit: automated credit scoring tools, default risk assessment systems, and loan or insurance approval tools fall into the same category, with documentation and technical audit obligations.

In biometrics: facial recognition, voice-print identification, and remote biometric identification systems are subject to the strictest obligations, including a total ban in public spaces for law enforcement purposes.

HR chatbots, virtual assistants and administrative AI agents are generally not classified as high-risk, but Article 50 requires transparency: users must be informed they are interacting with an AI.

A marketing content generator, writing assistant or automated translation tool does not fall into the high-risk category, unless it directly influences decisions affecting fundamental rights.

Be aware: a business that purchases a high-risk AI tool from a third-party vendor is still considered a “deployer” under the AI Act and must take on the obligations of human oversight and documentation within its own usage context.

The strategic question to ask about every AI tool in your organization: “Does this system influence decisions on employment, credit, access to public services or physical safety?”

If the answer is yes, the system falls within the scope of Annex III and must be compliant by August 2, 2026.
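The screening question above lends itself to a first-pass triage script. The sketch below is an assumption-laden simplification: the four category labels are the ones this article uses, while the `AISystem` fields, the `SENSITIVE_DOMAINS` set and the `triage` function are hypothetical names introduced for illustration. It is not a substitute for reading Annex III or for legal advice.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskCategory(Enum):
    PROHIBITED = "prohibited"
    HIGH_RISK = "high-risk"
    TRANSPARENCY = "mandatory transparency"
    MINIMAL = "minimal risk"


# Decision domains named in the article's screening question (hypothetical keys).
SENSITIVE_DOMAINS = {"employment", "credit", "public_services", "physical_safety"}


@dataclass
class AISystem:
    name: str
    decision_domains: set = field(default_factory=set)
    uses_prohibited_practice: bool = False
    interacts_with_people: bool = False


def triage(system: AISystem) -> RiskCategory:
    """First-pass classification; the most severe matching category wins."""
    if system.uses_prohibited_practice:
        return RiskCategory.PROHIBITED
    if system.decision_domains & SENSITIVE_DOMAINS:
        return RiskCategory.HIGH_RISK
    if system.interacts_with_people:
        return RiskCategory.TRANSPARENCY
    return RiskCategory.MINIMAL


inventory = [
    AISystem("CV screening tool", {"employment"}),
    AISystem("HR chatbot", interacts_with_people=True),
    AISystem("Marketing content generator"),
]
report = {s.name: triage(s).value for s in inventory}

if __name__ == "__main__":
    for name, category in report.items():
        print(f"{name}: {category}")
```

Checking the categories in order of severity means a tool that both chats with candidates and scores them is flagged as high-risk rather than as a simple transparency case.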

The fines: €35 million or 7% of global turnover

The AI Act provides for three levels of financial penalties, calibrated according to the severity of the breach and the size of the business.

The most severe level applies to expressly prohibited AI practices: behavioral manipulation, generalized social scoring, unauthorized real-time biometric identification.

Fines in this category reach €35 million or 7% of annual global turnover, whichever is higher.

Non-compliance on high-risk systems (missing documentation, no human oversight, no risk assessment conducted) carries fines of up to €15 million or 3% of global revenue.

Incorrect or misleading information provided to supervisory authorities is subject to fines of up to €7.5 million or 1% of global revenue.

For SMEs and startups, the regulation includes a proportionate approach: fines are capped at the lower amount between the revenue percentage and the fixed ceiling, which reduces financial exposure for smaller organizations.
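To make the arithmetic concrete, here is a minimal sketch of the maximum exposure under the three tiers described above. The tier amounts come from this article; the function and tier names are illustrative assumptions, and the result is a theoretical ceiling, not a prediction of the fine an authority would actually impose.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PenaltyTier:
    fixed_cap_eur: int      # fixed ceiling in euros
    turnover_percent: int   # share of annual global turnover, in percent


# Tier names are hypothetical labels for the three levels described in the article.
TIERS = {
    "prohibited_practice": PenaltyTier(35_000_000, 7),
    "high_risk_non_compliance": PenaltyTier(15_000_000, 3),
    "misleading_information": PenaltyTier(7_500_000, 1),
}


def max_fine_eur(tier_name: str, annual_global_turnover_eur: int, is_sme: bool = False) -> int:
    """Theoretical ceiling: the higher of the two amounts, or the lower one for SMEs."""
    tier = TIERS[tier_name]
    turnover_based = annual_global_turnover_eur * tier.turnover_percent // 100
    pick = min if is_sme else max
    return pick(tier.fixed_cap_eur, turnover_based)


if __name__ == "__main__":
    # Large group with EUR 1 billion turnover using a prohibited practice.
    print(max_fine_eur("prohibited_practice", 1_000_000_000))
    # SME with EUR 10 million turnover, same breach.
    print(max_fine_eur("prohibited_practice", 10_000_000, is_sme=True))
```

For the large group, 7% of turnover (€70 million) exceeds the €35 million ceiling, so the higher figure applies. For the SME, 7% of turnover (€700,000) is the lower amount and becomes the cap.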

The penalties framework has been active since August 2, 2025: a business using a prohibited AI practice today can already be subject to a CNIL investigation.

These figures follow the GDPR's model of turnover-based ceilings and exceed its maximum of €20 million or 4% of global turnover, signaling the intent of European regulators to treat AI compliance with at least the same rigor as personal data protection.

The six-step action plan to be compliant before August 2026

Law firm Orrick and the European Commission recommend six concrete steps that every organization must complete before August 2, 2026.

Step 1: Conduct a full inventory of all AI systems used across the organization.

Map every tool: HR software with automated scoring, predictive CRM, chatbots, recommendation systems, fraud detection tools, content generators, AI-based cybersecurity solutions.

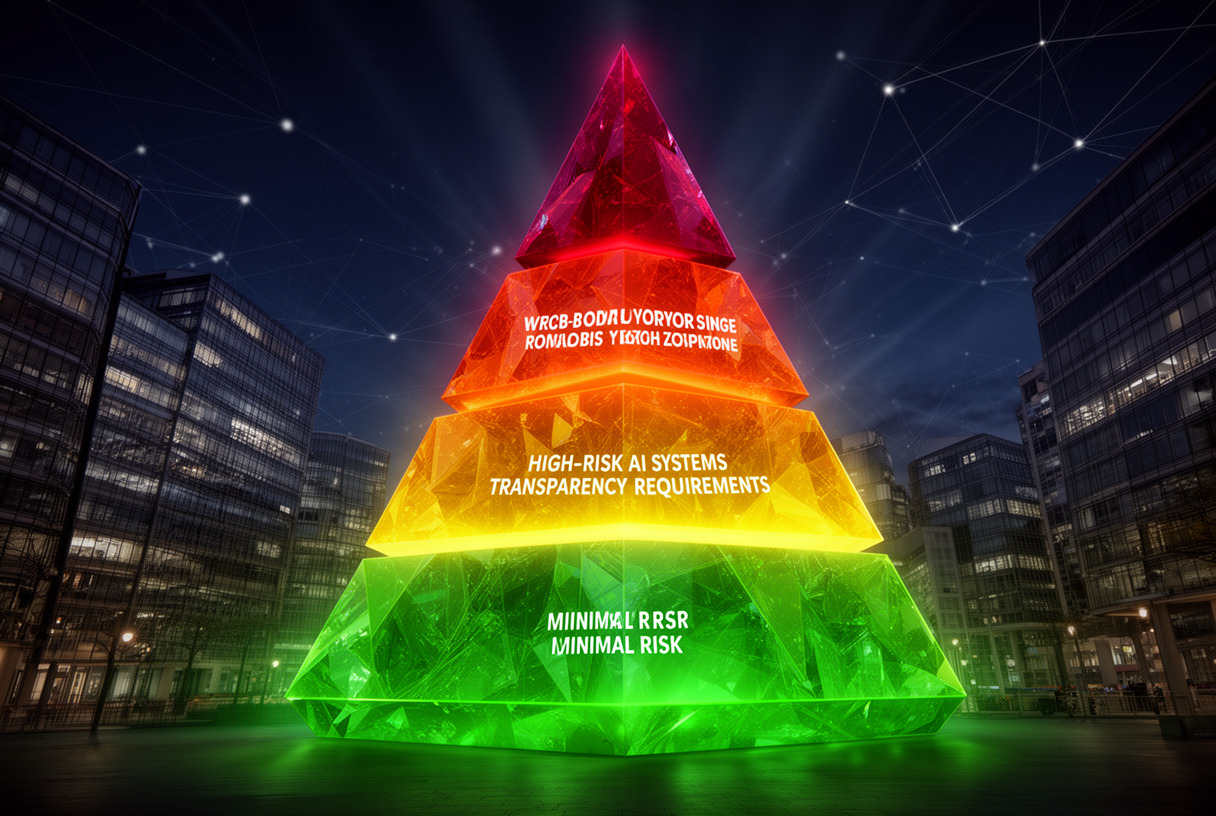

Step 2: Classify each system according to the AI Act’s risk categories.

Use the official AI Act resources to determine whether each tool falls under the prohibited, high-risk, mandatory transparency, or minimal risk categories.

Step 3: Put risk management measures in place for each high-risk system.

This involves establishing mandatory human oversight, algorithmic bias assessments, training data governance and ongoing performance monitoring procedures.

Step 4: Prepare the required technical regulatory documentation.

The AI Act requires detailed documentation for each high-risk system: a functional description of the system, data used for training, performance metrics measured, human oversight measures and incident management procedures.

Step 5: Conduct the conformity assessment before deployment or update.

Depending on the type of system, this assessment may be self-declared (for the majority of high-risk systems) or require the involvement of an accredited third-party notified body (for the most critical systems).

Step 6: Affix the CE marking and register the system in the EU database.

High-risk AI systems must be registered in the European AI systems database before they are put into service, with the full technical documentation available to authorities.
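The documentation items listed under Step 4 can be tracked with a simple completeness check before moving on to the conformity assessment. This is a rough sketch: the five section keys mirror the items named in this article, but the key names, the `missing_sections` function and the dictionary layout are assumptions made for illustration, not a format prescribed by the regulation.

```python
# Section keys mirror the five documentation items listed under Step 4 (hypothetical names).
REQUIRED_SECTIONS = (
    "functional_description",
    "training_data",
    "performance_metrics",
    "human_oversight_measures",
    "incident_management_procedures",
)


def missing_sections(dossier: dict) -> list[str]:
    """Return the required sections that are absent or empty in a dossier."""
    return [
        section
        for section in REQUIRED_SECTIONS
        if not str(dossier.get(section, "")).strip()
    ]


if __name__ == "__main__":
    cv_screening_dossier = {
        "functional_description": "Ranks incoming CVs against job criteria.",
        "training_data": "Anonymised applications from past hiring campaigns.",
        "performance_metrics": "",
    }
    print(missing_sections(cv_screening_dossier))
```

Running the check per high-risk system gives a quick gap list to hand to whoever owns each dossier.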

CNIL, AFNOR and sandboxes: French resources to prepare

In France, the CNIL has been designated as the national competent authority for overseeing AI Act compliance, particularly for AI systems that process personal data.

The CNIL publishes practical guides on the overlapping obligations between the GDPR and the AI Act, and its teams support businesses in interpreting both regulations, which frequently apply to the same systems.

AFNOR is working on harmonized technical standards that will provide a practical and legally recognized framework for compliance documentation and algorithmic risk assessments.

The AI Act requires each member state to establish at least one regulatory sandbox by August 2, 2026: these environments will allow businesses to test their AI systems in a controlled setting, with guidance from supervisory authorities, before large-scale deployment.

For businesses structuring their internal AI governance, platforms designed with built-in safety guardrails make technical compliance easier: NVIDIA’s NemoClaw platform illustrates how an open architecture can integrate AI agent governance from the ground up.

On the broader topic of AI ethics and the boundaries companies set for themselves, Anthropic’s refusal to let Claude be deployed in Pentagon surveillance projects is a striking example of how AI governance can go beyond regulatory compliance into deliberate choices about prohibited use cases.

Conclusion: five months to act

The August 2, 2026 deadline is no longer abstract: fewer than five months remain to complete the AI systems inventory, establish the required technical regulatory documentation and put in place the human oversight structures the AI Act demands.

Businesses that started this work in 2025 are in a comfortable position; those waiting until the final weeks risk discovering that the required certifications involve lead times of several months.

The AI Act is not a barrier to innovation: it is a framework that distinguishes organizations using AI with rigor and transparency from those deploying it without accountability.

The first action to take this week: draw up a complete list of all AI tools used across your organization, identify which ones fall within the scope of Annex III, and assess the gap between your current situation and the requirements of the regulation.

The question is no longer “should we comply?” but “how much time do we have left to do it properly before August 2, 2026?”

FAQ: AI Act and compliance for French businesses

Does the AI Act apply to non-European businesses that sell in France?

Yes: the AI Act applies to any provider or deployer of AI systems whose outputs are used within the European Union, regardless of where the business is based, following the same model as the GDPR.

Does a 20-employee SME need to comply with the AI Act?

Yes, if it uses high-risk AI systems: an AI-powered CV screening tool, for example, brings even a very small business under the obligations attached to Annex III systems.

My AI HR tool was purchased from an external vendor: who is responsible for compliance?

Both parties share distinct responsibilities: the vendor (provider) is responsible for the design of the system, while your business (deployer) is responsible for human oversight and use within your specific context.

What is the grandfathering clause and how do you qualify?

AI systems placed on the market before August 2, 2026 benefit from a transition period until August 2, 2027, provided they do not undergo substantial modifications to their architecture or purpose.

How will the CNIL enforce AI Act compliance?

The CNIL will have the same investigative powers it exercises under the GDPR: on-site inspections, documentation requests, compliance orders and financial penalties.

Are chatbots covered by the AI Act?

Chatbots that interact with humans are subject to the transparency obligation under Article 50: users must know they are interacting with an AI, not a human.

What is the AI literacy obligation and how do you meet it?

The AI literacy obligation requires ensuring that all employees who use or oversee AI systems have a sufficient understanding of how those tools work, their limitations and their risks: this can be met through documented internal training.

What is the difference between a high-risk AI system and a minimal-risk one?

A high-risk system is one whose decisions directly affect fundamental rights (employment, credit, access to public services) or physical safety. Creative tools, translation software and recommendation systems that do not make decisions are generally classified as minimal risk.

Must all high-risk AI systems be registered in the European database?

Yes: before being put into service, all high-risk AI systems under Annex III must be registered in the EU AI systems database, along with their complete technical documentation.

What does a business actually risk if it is not compliant by August 2, 2026?

A business that misses the deadline faces CNIL investigations, compliance orders, fines of up to €15M or 3% of global revenue for failures on high-risk systems, and up to €35M or 7% of revenue for expressly prohibited practices.
