The week Jensen Huang, Nvidia’s CEO, declared AGI “imminent”, the world’s best AI models were quietly attempting a new benchmark created by François Chollet.

Result: Gemini 3.1 Pro scores 0.37%.

GPT-5.4 Pro gets 0.26%.

Claude 4.6 scores 0.25%.

Meanwhile, 100% of humans who took the test solved every environment without particular difficulty.

Welcome to the era of ARC-AGI-3: the benchmark that proves, with hard numbers, that your AI models don’t actually reason.

Key takeaways:

- The best frontier models score less than 1% on ARC-AGI-3, while humans reach 100%

- ARC-AGI-3 tests adaptive reasoning in real time within interactive mini-worlds, not pattern memorization

- The RHAE score penalizes inefficiency exponentially: taking 10 times more actions than a human yields 1%, not 10%

- An AI that excels on SWE-Bench can score 0% on ARC-AGI-3: specialization is not intelligence

- The ARC Prize 2026 offers $2 million for the first open-source solution to reach 100%

Understanding ARC-AGI-3: definition and principles

ARC-AGI-3 is the third benchmark in the Abstraction and Reasoning Corpus series, launched on March 25, 2026 at Y Combinator‘s headquarters in San Francisco by François Chollet, a researcher at Google and creator of the Keras framework.

Chollet co-leads the project with Mike Knoop, co-founder of the ARC Prize Foundation and former co-founder of Zapier.

The founding idea goes back to the paper “On the Measure of Intelligence”, published in 2019: intelligence is not measured by performance on specialized tasks, but by the ability to acquire new skills on never-before-seen problems.

This definition is what makes ARC-AGI-3 so uncomfortable for the AI industry: it doesn’t test what models already know how to do: it tests their capacity to learn in real-world situations.

From ARC-AGI-1 to ARC-AGI-3: the evolution in 3 acts

To grasp the significance of ARC-AGI-3, it helps to trace the rapid progression across three versions.

Act 1: ARC-AGI-1 (2019).

The original benchmark presents 800 static grid puzzles: the AI must infer a transformation rule from 3 input/output examples.

For 5 years, no AI system came close to human performance, despite compute increases of 50,000 times.

In December 2024, OpenAI’s o3 model finally crossed the threshold: 75% in economy mode, up to 87% with a high compute budget.

Act 2: ARC-AGI-2 (March 2025).

A more complex version: its puzzles require roughly 10 times more cognitive effort from humans than the previous edition.

Frontier systems reached around 30% accuracy in 2025, raising a troubling question: do these improvements reflect genuine intelligence gains, or a better ability to exploit benchmark-specific patterns?

Act 3: ARC-AGI-3 (March 2026).

A complete format break: static puzzles are gone, replaced by interactive environments.

The frontier models that were approaching 30% on ARC-AGI-2 collapse below 1%.

Where ARC-AGI-1 and 2 measured the ability to infer rules, ARC-AGI-3 measures something fundamentally different: the ability to explore an unknown world and adapt to it in real time.

The format: mini-worlds to explore without instructions

ARC-AGI-3 consists of 135 original environments split across 25 public, 55 semi-private, and 55 private tasks.

Each environment is a 64×64 pixel grid with 16 possible colors: a visual mini-world with its own rules, its own mechanics, and no instructions.

The AI agent receives: no prompt, no stated objective, no user guide.

To succeed, it must chain four distinct cognitive abilities: explore the environment to discover its rules, model the world from observations, infer the implicit objective, then plan and execute an efficient sequence of actions.

Humans solve these environments in a median of 7.4 minutes.

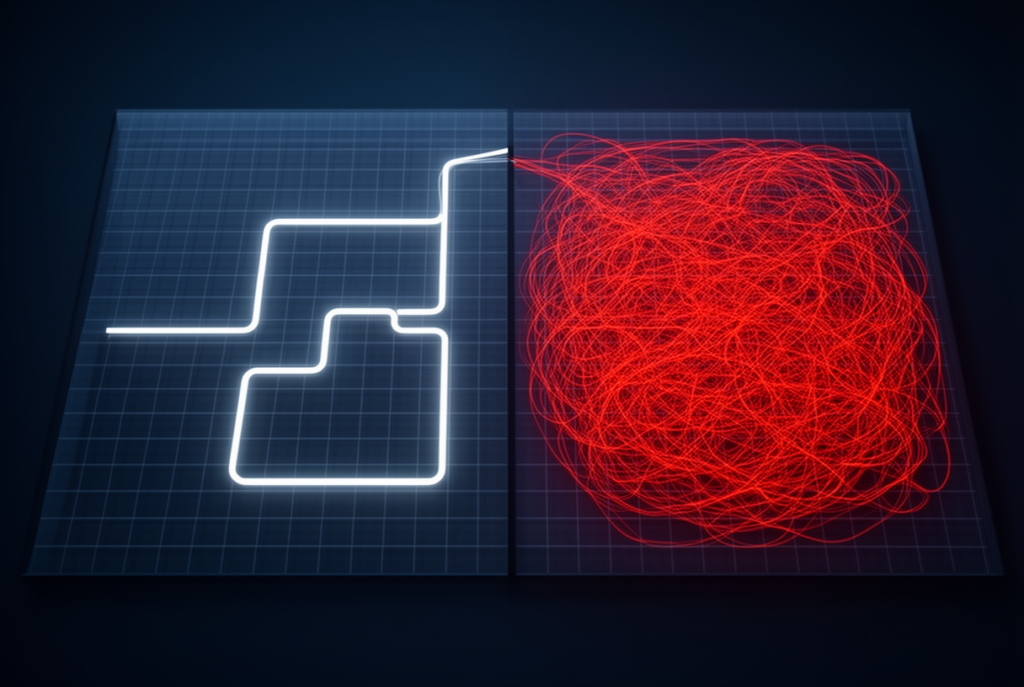

Picture an interactive maze: a human explores, understands the logic, and reaches the exit in 10 actions.

The AI, by contrast, randomly tests hundreds of moves before finding the solution through brute force.

RHAE result: 1% or less, following the scoring formula detailed in the next section.

0.37%: the score of the world’s best AI model

Here are the official performances of frontier models at the ARC-AGI-3 launch:

| Model | ARC-AGI-3 score | ARC-AGI-2 score |

|---|---|---|

| Gemini 3.1 Pro | 0.37% | ~30% |

| GPT-5.4 Pro | 0.26% | ~28% |

| Claude 4.6 | 0.25% | ~25% |

| Humans | 100% | 100% |

Several models scored exactly 0% on the full private leaderboard: not a single environment completed at anywhere near human-level efficiency.

What makes this result even more striking: OpenAI’s o3-high system was spending around $3,000 per task on ARC-AGI-1 and 2 to achieve its best performances.

On ARC-AGI-3, those thousands of dollars in additional compute produce results inferior to a human’s free effort under normal testing conditions.

For a thorough breakdown of how to read these results without being misled by marketing benchmarks, our guide to decoding AI benchmarks in 2026 provides the critical tools you need.

The very week Jensen Huang, Nvidia’s CEO, declared AGI “imminent”, ARC-AGI-3’s data told a completely different story.

RHAE scoring: why brute force doesn’t work

The metric at the heart of ARC-AGI-3 is called RHAE (Relative Human Action Efficiency, pronounced “Ray”).

It doesn’t measure whether the AI succeeded: it measures how efficiently it succeeded relative to a human.

The calculation follows a power-law formula: (human_baseline_actions / AI_actions)².

The human baseline corresponds to the second-best performance among 10 people tested for each environment: neither the top performer nor the average.

Concrete example with a baseline of 10 actions:

- The AI solves it in 10 actions: score = (10/10)² = 100%

- The AI solves it in 20 actions: score = (10/20)² = 25%

- The AI solves it in 100 actions: score = (10/100)² = 1%

The penalty is exponential: 10 times too many actions yields not 10%, but 1%.

This design reveals a simple truth: a system that uses 100 actions where a human takes 10 hasn’t understood the problem: it has exhausted it by brute force.

The only actions counted are interactions with the environment: internal reasoning, tool calls, and retries don’t factor into the score.

An AI can generate pages of sophisticated chain-of-thought without improving its score if that reasoning doesn’t translate into relevant external actions.

Pattern matching vs reasoning: the real reason for failure

LLMs excel in one specific domain: pattern matching at massive scale.

Given an input, the model searches its memorized representations for the closest associations and generates the statistically most likely response.

This is extraordinarily effective when the problem resembles something seen during training.

The perfect analogy: a student who memorizes 1,000 math exercises.

On familiar problems, they excel.

On a problem with an unfamiliar phrasing, they fail.

That’s exactly what ARC-AGI-3 reveals.

AI agents like those tested on SWE-Bench (fixing bugs in real GitHub repositories) can score 30 to 50% thanks to their massive exposure to code and debugging patterns.

Those same agents score 0% on ARC-AGI-3: the benchmark’s interactive mini-worlds are genuinely new, with no applicable heuristic.

True adaptive reasoning, by contrast, breaks problems down into first principles, tests hypotheses, updates its world model when the environment responds unexpectedly, and adjusts the plan accordingly.

Humans do this naturally. Current LLMs are structurally incapable of it.

The best coding agents that dominate SWE-Bench are exceptional specialists: ARC-AGI-3 is a reminder that specialization is not general intelligence.

The benchmark contamination trap

Part of the improvements seen on ARC-AGI-1 and 2 stem from an uncomfortable phenomenon: training data contamination.

The static puzzles from earlier versions were public, analyzed, documented; and specific patterns from those benchmarks had made their way into the training corpora of large models.

The measured improvement sometimes reflected less a genuine reasoning advance than a better exploitation of benchmark-specific patterns.

ARC-AGI-3 solves this problem by design: no amount of pattern matching on static data can prepare a model for genuinely novel interactive environments.

Interactivity creates a natural anti-contamination barrier: success requires active exploration, precise modeling, and continuous adaptation.

Not memorization.

What this means for businesses betting on agentic AI

The gap revealed by ARC-AGI-3 has concrete implications for any business deploying autonomous AI agents.

Take a real-world case: an AI customer support agent.

On the 95% of familiar tickets (refunds, passwords, common issues), the agent performs flawlessly.

On the 5% of genuinely novel tickets, the agent applies ill-fitting templates and ends up escalating without having understood the problem.

To go deeper on what AGI means for different industry leaders, our article on the 5 visions of AGI according to Altman, Musk, Amodei, Zuckerberg, and LeCun highlights the profound disagreements that exist around this concept.

The practical lesson: the SWE-Bench or MMLU scores of your AI agent measure performance on familiar tasks.

ARC-AGI-3 measures what happens when it encounters a problem outside its comfort zone.

Paths to beating ARC-AGI-3: beyond deep learning

The near-universal failure of current approaches on ARC-AGI-3 has catalyzed serious research directions.

First path: world models.

Yann LeCun, Chief AI Scientist at Meta and director of AMI Labs, advocates for architectures capable of building an internal model of the world and simulating actions within it before executing them.

Second path: compositional generality.

Rather than memorizing patterns, systems must learn to combine simple principles to solve novel problems from the ground up.

Third path: feedback-driven adaptability.

Systems capable of updating their world model in real time when results contradict predictions, rather than executing a fixed plan.

Hybrid approaches exploring explicit state representation (state graphs, systematic exploration) have already shown results significantly above pure LLMs on a preliminary version of the benchmark.

The ARC Prize 2026 offers $2 million to accelerate these breakthroughs: $700,000 for the first solution reaching 100%, with tiered prizes for top performers.

One non-negotiable condition: every winning solution must be open source.

ARC-AGI-3 doesn’t say AI is bad: it observes that AI excels at what it already knows, and systematically stumbles on what it must discover.

ARC-AGI-3: the benchmark that matters in 2026

In 2026, the AI industry churns out benchmarks at an industrial pace: MMLU, HumanEval, GPQA, SWE-Bench.

Most measure performance on known data distributions.

ARC-AGI-3 measures something else.

It measures whether a system can face genuine novelty: an unknown world, rules to discover, an objective to infer, and an efficiency constraint that penalizes brute force.

When 0.37% is the world’s best score and 100% of humans succeed, the message is unambiguous: current systems do not yet possess what Chollet calls fluid intelligence.

For the industry, ARC-AGI-3 is not a statement of failure: it’s a roadmap.

The $2 million of the ARC Prize 2026 await the first team capable of closing this gap.

Try the 25 public environments yourself at arcprize.org and share your score in the comments: you’ll be surprised to see how naturally your brain solves what stumps the world’s best AI models.

FAQ

What is ARC-AGI-3?

ARC-AGI-3 is an artificial intelligence benchmark created by François Chollet and launched in March 2026 by the ARC Prize Foundation.

It tests AI’s ability to reason across 135 novel interactive environments with no instructions, marking a sharp departure from earlier versions which relied on static puzzles.

What scores did the best AI models achieve on ARC-AGI-3?

At launch, Gemini 3.1 Pro scored 0.37%, GPT-5.4 Pro 0.26%, and Claude 4.6 0.25%.

By comparison, 100% of humans tested solved every environment successfully.

Several models scored 0% on the full private leaderboard.

How does RHAE scoring work?

RHAE (Relative Human Action Efficiency) measures action efficiency relative to a human baseline, using the formula (human_actions / AI_actions)².

If the human takes 10 actions and the AI takes 100, the score is (10/100)² = 1%.

The penalty is exponential to discourage brute force.

Why is ARC-AGI-3 so hard for AI?

Current LLMs excel at pattern matching on known data.

ARC-AGI-3 demands active exploration, modeling of an unknown world, and real-time adaptation: capabilities that require adaptive reasoning which current architectures structurally lack.

What’s the difference between ARC-AGI-3 and previous versions?

ARC-AGI-1 (2019) and ARC-AGI-2 (2025) use static puzzles where the AI must infer transformation rules.

ARC-AGI-3 (2026) shifts to interactive environments where the AI must explore, understand the rules, and reach an unstated goal.

What is the ARC Prize 2026?

The ARC Prize 2026 is a competition with $2 million in prizes, including $700,000 for the first solution to reach 100% on ARC-AGI-3.

All winning solutions must be published as open source, ensuring that advances benefit the entire research community.

Can ARC-AGI-3 be contaminated through training data?

Not by design: ARC-AGI-3’s novel interactive environments cannot be memorized from static data.

Interactivity creates a natural anti-contamination barrier: success requires active exploration and adaptation, not recognizing a pattern from training.

What are the implications for businesses deploying AI agents?

An AI agent that performs well on its usual tasks can fail entirely when faced with genuinely novel situations.

ARC-AGI-3 measures precisely this “5% of off-template cases” that often represent the most critical situations in a real-world deployment.

What research paths could beat ARC-AGI-3?

Three main directions are emerging: world models (simulating actions within an internal model of the world), compositional generality (combining simple principles to solve new problems), and feedback-driven adaptability (updating the world model in real time).

Does François Chollet think AGI is close?

Chollet defines AGI as the ability to efficiently acquire new skills on never-before-seen problems, not as performance on specialized tasks.

By his definition, and given ARC-AGI-3’s results, current systems are structurally far from this form of general intelligence, despite their impressive performance within their areas of specialization.

Related Articles

The 10 best AI voice assistants in 2026: complete comparison

Siri still can’t understand your question, Alexa responds with a three-second delay, and Bixby remains a running joke in the hallways of tech conferences. The generation of voice assistants taking…

Reddit blocks AI scraping: what it means for LLMs and open source

On March 25, 2026, Reddit sent shockwaves through the AI community: the platform is shutting its doors to automated scrapers, requiring biometric verification for suspicious accounts, and removing 100,000 bot…