What Is GPT-4o?

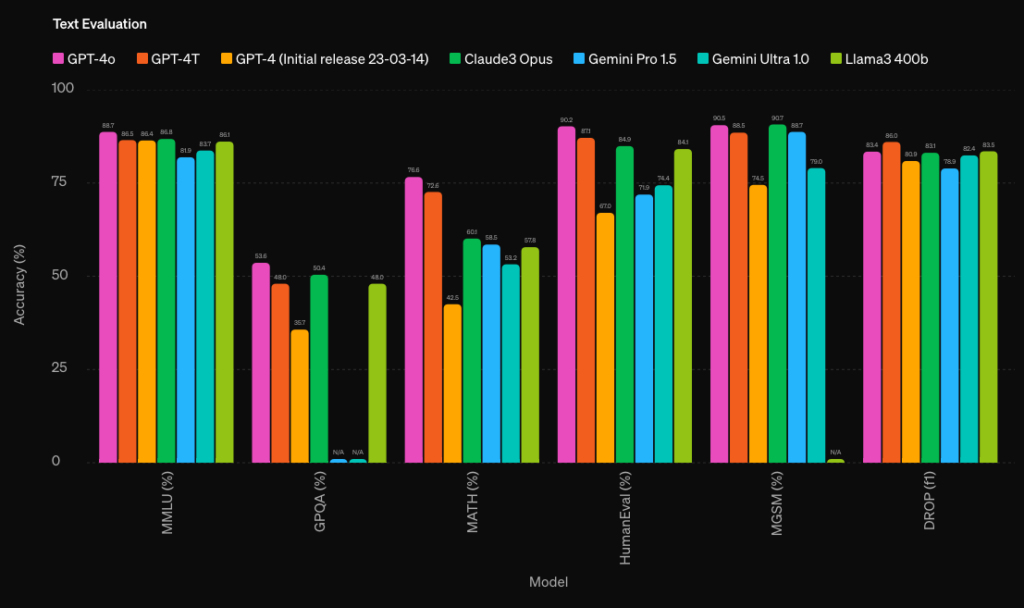

GPT-4o (the “o” stands for “omni”) is OpenAI’s multimodal AI model, released in May 2024. It was OpenAI’s first model to natively process text, images, and audio within a single network, a major change from earlier setups that chained separate models for each modality. GPT-4o set a new bar for speed, cost-efficiency, and cross-modal reasoning that influenced the rest of the AI industry.

While GPT-4o has since been succeeded by GPT-5 (August 2025), understanding its capabilities remains relevant: it’s still widely used via API, and its architectural innovations laid the groundwork for everything that followed.

GPT-4o’s Multimodal Capabilities

GPT-4o’s defining feature was its ability to reason across text, vision, and audio in one model. You could upload an image, ask questions about it, and receive spoken answers, with no handoff between separate systems. OpenAI reported audio response times as low as 232 ms (about 320 ms on average), along with more coherent cross-modal understanding.

For vision tasks, GPT-4o excelled at interpreting spatial relationships in images, analyzing complex diagrams and charts, reading handwritten text, and connecting visual input with written content. For audio, it could understand tone, emotion, and even generate expressive speech with laughter, singing, and varied intonation—capabilities that felt genuinely conversational.
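To make the "image plus question in one request" idea concrete, here is a minimal sketch of how such a request body might be assembled. It assumes the Chat Completions message format, where a user message carries a list of content parts. The helper name `build_vision_request` and the placeholder image bytes are hypothetical, and nothing is sent over the network.

```python
import base64
import json


def build_vision_request(question: str, image_bytes: bytes, mime: str = "image/png") -> dict:
    """Build a request body with one text part and one inline image part."""
    # The image travels inline as a base64 data URL.
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": "gpt-4o",
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {
                        "type": "image_url",
                        "image_url": {"url": f"data:{mime};base64,{encoded}"},
                    },
                ],
            }
        ],
    }


if __name__ == "__main__":
    # Placeholder bytes stand in for a real image file read from disk.
    body = build_vision_request("What trend does this chart show?", b"placeholder-image-bytes")
    print(json.dumps(body, indent=2))
```

Both parts sit in the same user message, which mirrors the point above: the question and the image reach the model together rather than going through separate systems.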

With a 128K token context window, GPT-4o could process roughly 300 pages of text in a single prompt, making it suitable for document analysis, code review, and long-form content tasks. Compared with GPT-4 Turbo, it was twice as fast and half the price via the API.
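The 300-page figure is easy to sanity-check with back-of-the-envelope arithmetic. The sketch below assumes roughly 4 characters per token, a common rule of thumb for English text rather than an exact tokenizer count. The function names and the reserved output budget are illustrative.

```python
import math

CONTEXT_WINDOW_TOKENS = 128_000
PAGES_PER_WINDOW = 300  # the article's rough figure


def tokens_per_page() -> float:
    """Tokens per page implied by '128K tokens is about 300 pages'."""
    return CONTEXT_WINDOW_TOKENS / PAGES_PER_WINDOW


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate; a real tokenizer gives different counts."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(text: str, reserved_for_output: int = 4_000) -> bool:
    """Check whether the prompt plus an output budget fits in the window."""
    return estimate_tokens(text) + reserved_for_output <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    print(f"about {tokens_per_page():.0f} tokens per page")
    print(fits_in_context("a" * 400))      # short prompt
    print(fits_in_context("a" * 600_000))  # roughly 150K estimated tokens
```

For production use, an actual tokenizer would replace the character heuristic, since code and non-English text tokenize quite differently.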

The GPT-4o Family: Mini and Variants

OpenAI released several variants of GPT-4o to serve different needs. GPT-4o mini, launched in July 2024, offered a lightweight alternative optimized for speed and cost, well suited to high-volume applications like chatbots and data extraction. For details, see our GPT-4o vs. GPT-4o mini comparison.

Throughout 2024 and early 2025, OpenAI released iterative improvements: better instruction following, enhanced creative writing, improved coding capabilities, and more accurate visual analysis. GPT-4o also received fine-tuning support, allowing businesses to customize the model with proprietary data for specialized tasks.

From GPT-4o to GPT-5: The Evolution

In August 2025, OpenAI released GPT-5, representing a fundamental architectural shift. Unlike GPT-4o’s single-model approach, GPT-5 is a hybrid system featuring multiple sub-models (main, mini, thinking, thinking-mini, nano) with a real-time router that selects the optimal variant based on task complexity. This means simple queries get fast, cheap responses while complex reasoning tasks automatically engage more powerful sub-models.

GPT-5 improved significantly on software engineering, cross-language development, and multi-step reasoning compared to GPT-4o. As of March 2026, the latest versions are GPT-5.3 Instant and GPT-5.4 Thinking/Pro, with earlier GPT-5.1 models having been retired. The reasoning capabilities build on the o1 reasoning model lineage that OpenAI introduced with o1-preview in September 2024.

GPT-4o’s Legacy and Current Status

Despite GPT-5’s arrival, GPT-4o remains relevant in 2026 for several reasons. It’s still available via API at very competitive pricing, making it a cost-effective choice for applications that don’t need GPT-5’s advanced reasoning. Many production systems still run on GPT-4o or GPT-4o mini, particularly for tasks like content generation, translation, summarization, and basic image analysis where it performs excellently.

The GPT-4o family also remains a common target for model distillation: outputs from GPT-5, acting as a “teacher,” are used to fine-tune GPT-4o mini for a specific task, which can approach GPT-5 quality on that task at a fraction of the cost. This approach has become standard practice for companies optimizing their AI infrastructure.
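As a rough illustration of the data-preparation step in distillation, the sketch below turns (input, teacher output) pairs into JSONL in the chat-style `messages` format used for fine-tuning chat models. The task, the system prompt, and the sample pairs are invented for illustration. In practice the teacher outputs would be stored responses from the larger model.

```python
import json

# Hypothetical task description shared by every training example.
SYSTEM_PROMPT = "Classify the support ticket as billing, bug, or other."


def to_training_example(user_input: str, teacher_output: str) -> dict:
    """Wrap one input and the teacher's answer as a chat-format example."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": user_input},
            {"role": "assistant", "content": teacher_output},
        ]
    }


def to_jsonl(pairs) -> str:
    """Serialize the examples as one JSON object per line."""
    return "\n".join(json.dumps(to_training_example(u, t)) for u, t in pairs)


if __name__ == "__main__":
    # Invented pairs; the second element stands in for a teacher response.
    pairs = [
        ("I was charged twice this month.", "billing"),
        ("The export button crashes the app.", "bug"),
    ]
    print(to_jsonl(pairs))
```

The smaller model then learns to reproduce the teacher's answers for this one task, which is why the quality gain is task-specific rather than general.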

Choosing the Right Model in 2026

The OpenAI model landscape in 2026 offers options for every need. GPT-4o mini remains the go-to for high-volume, cost-sensitive applications. GPT-4o serves as a reliable mid-tier option. GPT-5.3 Instant handles most general tasks with improved quality, while GPT-5.4 Thinking and Pro tackle complex reasoning and specialized domains.
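The guidance above can be written down as a small decision helper. This is only a sketch of the article's rule of thumb: the function name and the three boolean flags are hypothetical, and the returned strings are the model names as used in this article, not verified API identifiers.

```python
def choose_model(needs_deep_reasoning: bool, high_volume: bool, cost_sensitive: bool) -> str:
    """Map coarse workload traits to the tiers described in the article."""
    if needs_deep_reasoning:
        # Complex reasoning or specialized domains.
        return "GPT-5.4 Thinking"
    if high_volume and cost_sensitive:
        # Chatbots, data extraction, and similar bulk workloads.
        return "GPT-4o mini"
    if cost_sensitive:
        # Reliable mid-tier option.
        return "GPT-4o"
    # Default for most general tasks.
    return "GPT-5.3 Instant"


if __name__ == "__main__":
    print(choose_model(needs_deep_reasoning=False, high_volume=True, cost_sensitive=True))
    print(choose_model(needs_deep_reasoning=True, high_volume=False, cost_sensitive=False))
```

Real selection usually also weighs latency targets and measured quality on your own evaluation set, so treat the flags as a starting point.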

For a deeper understanding of how these models compare with previous generations, check out our guides on GPT-4 Turbo and the evolution of OpenAI’s model lineup. The pace of improvement shows no signs of slowing, with each generation bringing meaningful advances in reasoning, multimodality, and efficiency.
