GameNGen: The Neural Network That Runs DOOM Without a Game Engine

In August 2024, researchers from Google Research, Google DeepMind, and Tel Aviv University unveiled GameNGen, a neural model that generates real-time gameplay for the classic FPS DOOM without relying on a traditional game engine. Built on a modified Stable Diffusion architecture, GameNGen produces playable frames at more than 20 FPS on a single TPU, predicting each new frame from the player’s inputs and the preceding frames.

This was a milestone: the authors describe GameNGen as the first game engine powered entirely by a neural model that supports real-time interaction with a complex environment. It handles turning, strafing, shooting, enemy behavior, and damage mechanics with high visual fidelity. Human raters were only slightly better than chance at distinguishing short clips of GameNGen from actual DOOM gameplay.

How GameNGen Works: Training and Architecture

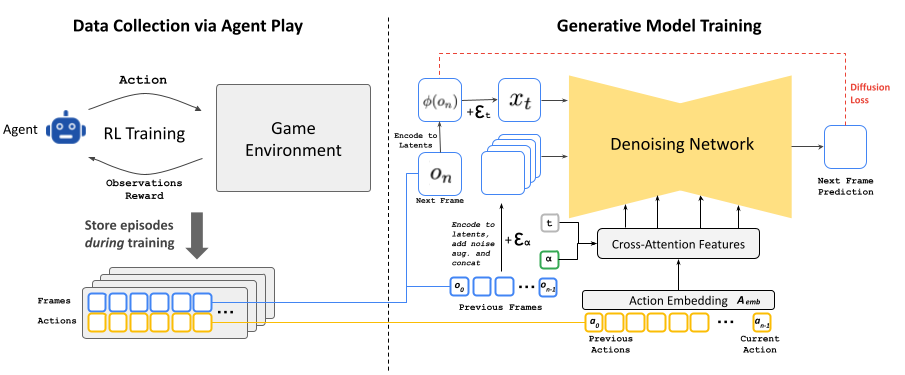

GameNGen’s pipeline involves two phases. First, a reinforcement learning agent learns to play DOOM at various difficulty levels, and its play sessions are recorded as gameplay trajectories: sequences of frames paired with the actions taken. Because the agent tries different strategies, weapons, and map areas, the recordings form a training dataset that covers a broad range of game states.
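The data-collection phase can be pictured with a minimal sketch. Everything here is a hypothetical stand-in: `ToyEnv` replaces the game, a random policy replaces the trained agent, and the observation is a step counter rather than an image. Only the recording pattern (store each observation with the action chosen for it) reflects the description above.

```python
import random

class ToyEnv:
    """Hypothetical stand-in for a game: the observation is a step counter."""

    def __init__(self, episode_len=5):
        self.episode_len = episode_len
        self.t = 0

    def reset(self):
        self.t = 0
        return self.t

    def step(self, action):
        # The toy ignores the action; a real game would react to it.
        self.t += 1
        done = self.t >= self.episode_len
        return self.t, done

def record_episode(env, policy):
    """Play one episode and return a list of (observation, action) pairs."""
    trajectory = []
    obs = env.reset()
    done = False
    while not done:
        action = policy(obs)
        trajectory.append((obs, action))
        obs, done = env.step(action)
    return trajectory

ACTIONS = ["forward", "turn_left", "turn_right", "fire"]
rng = random.Random(0)

def random_policy(obs):
    # Placeholder for the RL agent: picks an action uniformly at random.
    return rng.choice(ACTIONS)

dataset = [record_episode(ToyEnv(), random_policy) for _ in range(3)]
print(len(dataset), "episodes,", len(dataset[0]), "steps each")
```

In a real pipeline the observations would be rendered frames and the policy would be the agent being trained, so the dataset would mix early, clumsy play with later, more skilled play.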

In the second phase, a modified Stable Diffusion 1.4 model is fine-tuned on these trajectories. Rather than conditioning on a text prompt or a single image, the model receives an extended sequence of past frames along with the corresponding player inputs. To combat autoregressive drift, where small errors in generated frames compound once those frames are fed back in as context, the researchers apply Gaussian noise augmentation to the context frames during training, which significantly improves long-horizon stability.
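The noise augmentation idea can be illustrated with a short sketch. It makes several assumptions that are not from the source: frames are flat lists of floats, `max_sigma` is an arbitrary illustrative value, and the noise level is drawn uniformly once per training sample. It shows the general technique, not the researchers’ implementation.

```python
import random

def augment_context(frames, max_sigma=0.7, rng=None):
    """Add Gaussian noise of one randomly drawn strength to all context frames.

    frames: list of frames, each a flat list of floats (hypothetical layout).
    Returns (noisy_frames, sigma). Returning sigma lets a training loop hand
    the noise level to the model as an extra input (an assumption here).
    """
    rng = rng or random.Random()
    sigma = rng.uniform(0.0, max_sigma)  # one noise level per training sample
    noisy = [[px + rng.gauss(0.0, sigma) for px in frame] for frame in frames]
    return noisy, sigma

context = [[0.5] * 16 for _ in range(4)]  # four tiny dummy frames
noisy_context, sigma = augment_context(context, rng=random.Random(0))
print(f"noise level used for this sample: {sigma:.3f}")
```

Training on corrupted context teaches the model to produce a clean next frame even when its inputs are imperfect, which is the situation it faces at inference time when the context consists of its own earlier outputs.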

The result is a neural network that effectively acts as a game engine: it receives controller input, infers the game state from its window of recent frames and actions rather than from explicit variables, and outputs the next visual frame. No hand-written game logic, physics engine, or rendering pipeline is required; the behavior is learned entirely from data.
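The control flow of such a neural engine is an autoregressive loop, sketched below. The names `run_neural_engine`, `toy_model`, and `context_len` are hypothetical, and the toy "model" is a plain function whose "frame" is a single integer position, standing in for a diffusion model that outputs images.

```python
from collections import deque

def run_neural_engine(predict_next_frame, first_frame, actions, context_len=4):
    """Generate one frame per action, feeding each output back in as context."""
    frames = deque([first_frame], maxlen=context_len)  # oldest frames fall out
    past_actions = deque(maxlen=context_len)
    outputs = []
    for action in actions:
        past_actions.append(action)
        frame = predict_next_frame(list(frames), list(past_actions))
        frames.append(frame)  # the prediction becomes part of the next input
        outputs.append(frame)
    return outputs

def toy_model(frames, actions):
    # Stand-in for the learned model: move one unit left or right, else stay.
    step = {"left": -1, "right": 1}.get(actions[-1], 0)
    return frames[-1] + step

print(run_neural_engine(toy_model, 0, ["right", "right", "left", "fire"]))
# prints [1, 2, 1, 1]
```

The bounded `deque` makes one design consequence visible: anything that has scrolled out of the context window is no longer available to the model, since there is no separate game-state store to consult.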

From GameNGen to AI World Models in 2026

GameNGen was a proof of concept, but it opened the floodgates for a new category of AI systems: neural world models for interactive environments. By early 2026, several major projects have pushed this frontier much further.

Google Genie 3 and Project Genie

Google DeepMind’s Genie series represents the most ambitious evolution of the concept. The original Genie (2024) was trained on roughly 30,000 hours of 2D platformer gameplay video. Genie 2 (December 2024) demonstrated the ability to generate interactive 3D scenes from a single prompt image, including first-person and third-person perspectives. Genie 3, announced in August 2025 and launched publicly in January 2026, takes this further by generating diverse interactive environments from text, images, or sketches. It predicts what happens next in real time based on user actions, effectively creating playable worlds on the fly.

Oasis by Decart: AI-Generated Minecraft

Oasis, developed by Decart, reimagines Minecraft entirely through real-time AI generation. Every frame is produced by a world model trained on millions of hours of Minecraft footage. The system takes keyboard and mouse input and generates gameplay in real time, including learned approximations of physics: players can move, jump, break blocks, manage inventory, and interact with the world. Oasis demonstrated that AI world models could handle complex sandbox-style gameplay, not just linear corridor shooters.

DIAMOND and Other Research Models

DIAMOND (DIffusion As a Model Of eNvironment Dreams), introduced in the paper "Diffusion for World Modeling" by researchers from the University of Geneva, the University of Edinburgh, and Microsoft Research, along with various other academic projects, has explored applying diffusion-based world models to games like Counter-Strike, Atari classics, and driving simulations. These models continue to improve in visual quality, consistency, and the ability to maintain coherent game states over longer play sessions.

Challenges and Limitations in 2026

Despite impressive progress, AI game engines still face significant technical hurdles. Visual hallucinations remain an issue: objects can appear or disappear unexpectedly, and textures may shift after extended play sessions. Memory and state consistency are difficult because the model’s only record of the world is a limited window of recent frames and actions, so it struggles to keep a game world coherent over long periods, unlike traditional engines that track state explicitly.

Computational costs are another barrier. While GameNGen ran on a single TPU for DOOM (a 1993 game), generating frames for modern graphically intensive titles would require orders of magnitude more compute. Resolution and frame rate remain far below, and latency well above, what conventional engines deliver for AAA games.
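A little arithmetic shows why the gap is so wide. The sketch below computes the time available per frame at a few frame rates and compares pixel counts for two resolutions. The resolutions are illustrative assumptions (1080p versus a small 320x240 retro-style frame), not measurements of any specific system.

```python
def frame_budget_ms(fps):
    """Time available to produce one frame, in milliseconds."""
    return 1000.0 / fps

def pixel_ratio(res_a, res_b):
    """How many times more pixels res_a has than res_b; both are (width, height)."""
    return (res_a[0] * res_a[1]) / (res_b[0] * res_b[1])

for fps in (20, 30, 60):
    print(f"{fps} FPS -> {frame_budget_ms(fps):.1f} ms per frame")
# prints 50.0 ms, 33.3 ms and 16.7 ms respectively

# Illustrative resolutions (assumptions): 1080p versus a 320x240 frame.
print(pixel_ratio((1920, 1080), (320, 240)))  # prints 27.0
```

Under these assumptions, moving from 20 FPS at 320x240 to 60 FPS at 1080p means producing 27 times as many pixels in a third of the time, before accounting for any increase in scene complexity.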

There are also fundamental questions about game design. Traditional engines allow developers precise control over mechanics, physics, and world rules. Neural engines generate plausible outputs but cannot guarantee deterministic behavior—a challenge for competitive multiplayer or games requiring exact physics.
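The determinism problem can be made concrete with a toy contrast. In this sketch, `engine_step` is an explicit rule and `sampled_step` is a hypothetical stand-in for a generative model, represented simply as the same rule plus sampling noise. Real neural engines are far more complex, but the reproducibility issue has the same shape.

```python
import random

def engine_step(x, action):
    """Explicit rule: the same state and action always give the same result."""
    return x + {"left": -1, "right": 1}[action]

def sampled_step(x, action, rng):
    """Toy stand-in for a generative model: the rule plus sampling noise."""
    return engine_step(x, action) + rng.gauss(0.0, 0.1)

a = sampled_step(0, "right", random.Random(1))
b = sampled_step(0, "right", random.Random(2))
c = sampled_step(0, "right", random.Random(1))

print(engine_step(0, "right"))  # always 1
print(a == b)  # different sampler seeds, different outcomes
print(a == c)  # identical seeds reproduce the same outcome
```

Two players sending the same input can therefore see different results unless every source of randomness is pinned, and even then the output is only an approximation of the intended rule.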

The Future of AI in Game Development

The most likely near-term impact of neural game engines is not replacing traditional engines but augmenting them. AI world models could revolutionize procedural content generation—creating environments, textures, NPC behavior, and level layouts that feel handcrafted but are generated on the fly. This could dramatically reduce development time and costs, particularly for indie studios.

For rapid prototyping, AI engines could allow designers to describe a game concept in natural language and immediately see a playable prototype. Google’s original Genie demonstrated this capability for simple 2D platformer-style games, and Project Genie extends the idea to explorable 3D worlds, with the technology advancing rapidly toward more complex genres.

The gaming industry, valued by some estimates at over $200 billion globally, stands at the beginning of a transformation. From GameNGen’s proof that a neural network could run DOOM to Genie 3’s text-to-interactive-world capabilities, the direction of travel is consistent: AI is becoming an increasingly central tool in how games are created, experienced, and evolved.
