On December 24, 2025, while most of the tech industry was winding down for Christmas, Nvidia announced the acquisition of Groq for $20 billion.

That figure deserves a pause: back in September 2025, Groq had just closed its Series D at a $6.9 billion valuation.

Three months later, Nvidia paid 2.9 times that amount to get its hands on the startup specializing in ultra-fast inference.

This isn’t simply a big check: it’s a signal that the AI battle is now being fought on the terrain of inference, and that the dominant players know exactly what they’re buying.

Key takeaways:

- Nvidia paid $20B for Groq, 2.9x its $6.9B valuation just three months prior

- The deal is structured as a non-exclusive license + acqui-hire to sidestep antitrust regulators

- The Groq 3 LPX delivers 35x more tokens/s/MW than the Blackwell NVL72, thanks to a radically different SRAM architecture

- GroqCloud currently charges $0.59/M input tokens versus $2.50/M for GPT-4o: Vera Rubin promises another 10x reduction

- The AI inference market is set to grow from $106B to $255B between 2025 and 2030

- Warren, Wyden, and the FTC are scrutinizing the deal: Europe is also starting to question its dependence on Nvidia

What Nvidia actually bought

A non-exclusive license, not a classic acquisition

The deal structure is surprising at first glance: Nvidia did not acquire Groq outright.

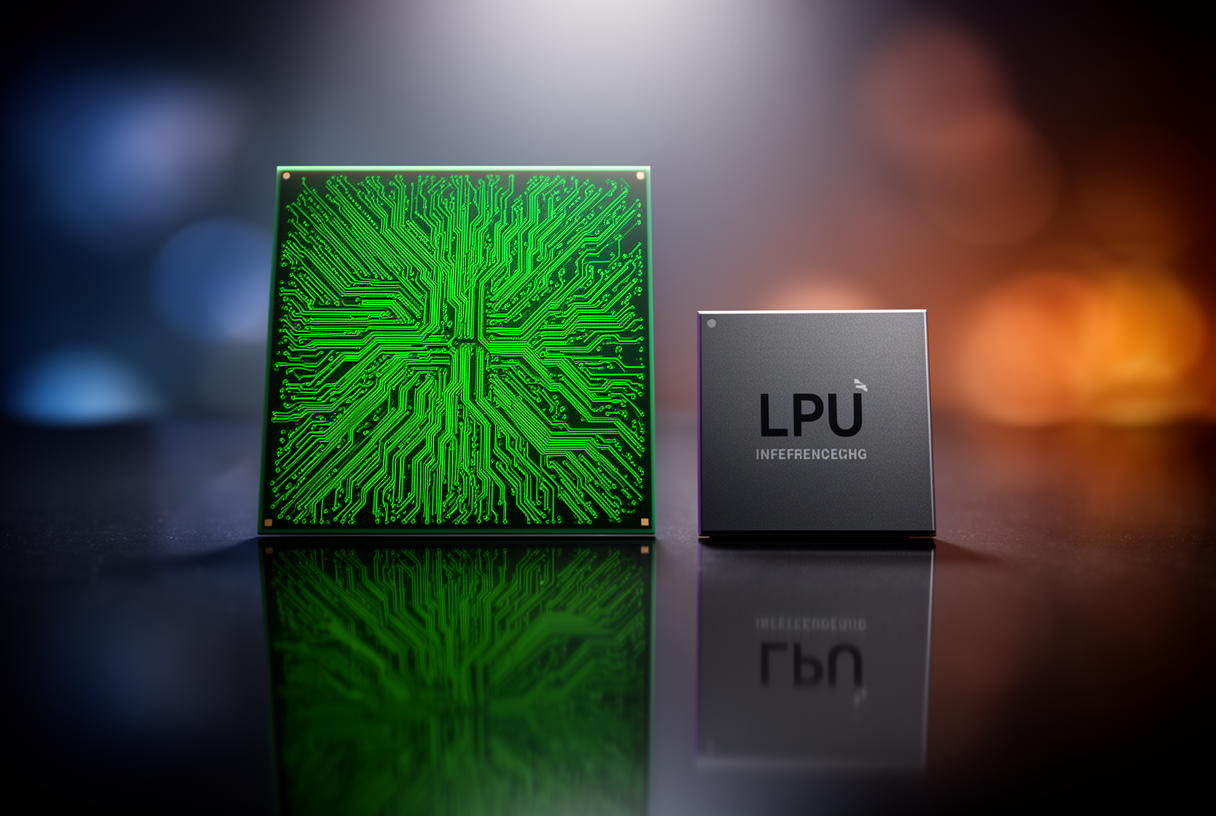

The contract takes the form of a non-exclusive license on Groq’s LPU (Language Processing Unit) technology, paired with an acqui-hire of key founders.

This structure is no accident: with 85 to 90% market share in AI training accelerators, Nvidia knew a direct acquisition would immediately trigger an antitrust investigation from the FTC and DOJ.

The “non-exclusive license” structure is a transparent legal fiction: Groq retains its legal existence, but the operational reality looks every bit like a full acquisition.

The precedent is instructive: in September 2020, Nvidia announced a $40 billion bid for ARM Holdings, then abandoned it in February 2022 under regulatory pressure, after roughly a year and a half of proceedings.

The acqui-hire: where the real value lies

The second component of the deal is the acqui-hire: Jonathan Ross (founder and CEO) and Sunny Madra (president) are joining Nvidia.

Ross is the architect of the LPU chip, the mind behind an approach that challenges thirty years of GPU dominance.

Without its original designers, Groq loses its ability to evolve the architecture into future generations: the real value isn’t in the patents, it’s in the future R&D trajectory, now housed within Nvidia.

Jensen Huang reportedly made his decision in under three weeks after being presented with the following analogy: training a model is like hauling cargo with an 18-wheeler.

Running inference is like doing last-mile delivery by bike: efficient, targeted, and incomparably cheaper per trip.

The Groq 3 LPX explained simply

LPU vs GPU: a radically different philosophy

A GPU system like Nvidia’s Blackwell NVL72, a rack of 72 Blackwell GPUs, is built to process as many calculations in parallel as possible: thousands of cores per chip, a complex cache hierarchy, and a dynamic scheduler that decides in real time which task to run and when.

That flexibility is an asset for model training, but it introduces inherent overhead the moment you shift to sequential inference.

The Groq LPU takes the opposite approach: the compiler decides in advance, down to the clock cycle, what each circuit will do and when data will arrive.

The result: zero wait stalls, zero unpredictable branch divergence, zero cache misses.

On token-by-token decoding workloads, this deterministic execution turns a microscopic architectural difference into a latency advantage measurable in hundreds of milliseconds per request.
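
To make compile-time scheduling concrete, here is a toy sketch in Python. It is not Groq’s compiler: the `Op` type, the `schedule` function, the operation names, and the cycle counts are all hypothetical, and the model assumes unlimited functional units. It only illustrates the principle that when every latency is fixed and known in advance, the start cycle of each operation can be computed before anything runs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Op:
    name: str
    latency: int        # fixed cycle count, assumed known at compile time
    deps: tuple = ()    # names of ops whose results this op consumes


def schedule(ops):
    """Assign each op a start cycle ahead of time.

    Ops must be listed in dependency order. Resource conflicts are
    ignored (assumption: one free functional unit per op).
    """
    finish = {}
    plan = {}
    for op in ops:
        # An op starts as soon as its slowest dependency has finished.
        start = max((finish[d] for d in op.deps), default=0)
        plan[op.name] = start
        finish[op.name] = start + op.latency
    return plan


# Hypothetical four-step slice of a decode pass.
program = [
    Op("load_weights", latency=2),
    Op("load_activations", latency=1),
    Op("matmul", latency=4, deps=("load_weights", "load_activations")),
    Op("softmax", latency=3, deps=("matmul",)),
]

plan = schedule(program)
for name, start in plan.items():
    print(f"cycle {start:>2}: {name}")
```

Nothing in `plan` depends on runtime conditions, which is the property the text describes: no scheduler has to make a decision while the chip is executing.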

SRAM architecture: 512 MB per chip, 256 accelerators per rack

The most distinctive feature of the Groq 3 LPU is its memory: each chip has 512 MB of high-speed SRAM used as primary working storage, where GPUs rely on external HBM.

The latency gap is stark: 1 nanosecond to access SRAM, versus 50 to 100 nanoseconds for HBM.

The Groq 3 LPX system assembles 256 of these interconnected LPUs in a single rack, for an aggregate SRAM capacity of 128 GB and a global memory bandwidth of 40 petabytes per second.

Each LPU connects via 96 C2C links at 112 Gbps, enabling deterministic synchronization of all 256 units as if they formed a single core.
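
The rack-level figures can be cross-checked with a few lines of arithmetic. This sketch uses only the numbers quoted above. The even split of bandwidth across chips and the treatment of 112 Gbps as a raw per-link rate with no encoding overhead are simplifying assumptions, not published specifications.

```python
CHIPS_PER_RACK = 256
SRAM_PER_CHIP_MB = 512
RACK_BANDWIDTH_PB_S = 40
C2C_LINKS_PER_CHIP = 96
C2C_LINK_GBPS = 112

# Aggregate SRAM, using binary units (1 GB = 1024 MB).
rack_sram_gb = CHIPS_PER_RACK * SRAM_PER_CHIP_MB / 1024

# Assumption: rack bandwidth is split evenly across chips (1 PB/s = 1000 TB/s).
per_chip_bandwidth_tb_s = RACK_BANDWIDTH_PB_S * 1000 / CHIPS_PER_RACK

# Raw chip-to-chip bandwidth per LPU: gigabits -> gigabytes -> terabytes.
c2c_per_chip_tb_s = C2C_LINKS_PER_CHIP * C2C_LINK_GBPS / 8 / 1000

print(f"Rack SRAM:              {rack_sram_gb:.0f} GB")
print(f"SRAM bandwidth per LPU: {per_chip_bandwidth_tb_s:.2f} TB/s")
print(f"C2C bandwidth per LPU:  {c2c_per_chip_tb_s:.3f} TB/s")
```

Under these assumptions, the 128 GB aggregate follows directly from 256 chips at 512 MB each, and each LPU would see roughly 156 TB/s of SRAM bandwidth against about 1.3 TB/s of chip-to-chip bandwidth.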

Cerebras, Groq’s direct competitor, pushes this logic further with 6x more SRAM, but on a wafer-scale architecture that remains difficult to manufacture at scale.

Prefill GPU + decode LPU: the winning combination

The prefill phase processes the entire input prompt (sometimes 50,000 tokens for a code analysis), a compute-intensive operation that can tolerate moderate latency.

The decode phase generates response tokens one by one, autoregressively: each token depends on the previous ones, so generation cannot be parallelized across output tokens, and the latency users feel depends directly on this speed.

Nvidia’s heterogeneous Vera Rubin platform plays into this duality: Rubin GPUs handle the compute-heavy prefill, while Groq 3 LPUs in the LPX rack take over the memory-bound decode.

Nvidia’s Dynamo orchestrator automatically routes tasks to the right hardware based on batch size and request characteristics.
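
The routing idea can be sketched as a simple rule. This is purely illustrative and is not Dynamo’s API or its actual policy: the `Task` type, the `route` function, the pool names, and the batch-size cutoff are all hypothetical, chosen only to show how phase and batch size could drive a hardware decision.

```python
from dataclasses import dataclass
from enum import Enum


class Phase(Enum):
    PREFILL = "prefill"
    DECODE = "decode"


@dataclass
class Task:
    phase: Phase
    batch_size: int


# Hypothetical cutoff: above this batch size, decode is treated as
# throughput-oriented rather than interactive.
INTERACTIVE_BATCH_LIMIT = 8


def route(task: Task) -> str:
    """Return the hardware pool for a task (illustrative rule only)."""
    if task.phase is Phase.PREFILL:
        return "rubin_gpu"      # compute-heavy prompt processing
    if task.batch_size <= INTERACTIVE_BATCH_LIMIT:
        return "groq_lpu"       # latency-sensitive token generation
    return "rubin_gpu"          # large-batch decode, throughput-oriented


tasks = [
    Task(Phase.PREFILL, batch_size=1),
    Task(Phase.DECODE, batch_size=1),
    Task(Phase.DECODE, batch_size=64),
]
for t in tasks:
    print(t.phase.value, t.batch_size, "->", route(t))
```

A real orchestrator would presumably weigh more signals, such as queue depth or prompt length, but the split by phase is the core of the heterogeneous design described here.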

The claimed result: 35x more throughput per megawatt for interactive inference tasks, compared to the Blackwell NVL72 system.

This figure applies to trillion-parameter models in interactive contexts: real-world results will vary depending on model size and prefill/decode ratio, and commercial availability of the Groq 3 LPX is still to be confirmed for 2026.

For the first time, an inference platform no longer has to choose between high throughput and low latency: Vera Rubin optimizes both phases separately on the hardware best suited to each.

Price impact: inference 10x cheaper

GroqCloud pricing vs. the market

GroqCloud, Groq’s cloud platform run by Simon Edwards since the acquisition, now offers pricing that is resetting expectations across the industry.

Llama 3.3 70B on GroqCloud costs $0.59/million input tokens and $0.79/M output tokens, averaging around $0.69/M tokens.

GPT-4o charges $2.50/M input tokens and $10.00/M output tokens: the gap is about 4x on input and nearly 13x on output in raw cost per token for comparable performance.
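
The size of the gap depends on whether you compare input prices, output prices, or a blend of the two. A quick check in Python, using only the list prices quoted above; the 50/50 token mix is an assumption that mirrors the simple average used for GroqCloud.

```python
# List prices in dollars per million tokens, as quoted in this article.
GROQ_LLAMA_70B = {"input": 0.59, "output": 0.79}
GPT_4O = {"input": 2.50, "output": 10.00}


def blended_price(prices, input_share):
    """Average price per million tokens for a given share of input tokens."""
    return input_share * prices["input"] + (1 - input_share) * prices["output"]


input_ratio = GPT_4O["input"] / GROQ_LLAMA_70B["input"]
output_ratio = GPT_4O["output"] / GROQ_LLAMA_70B["output"]

# Assumed 50/50 token mix between input and output.
blended_ratio = blended_price(GPT_4O, 0.5) / blended_price(GROQ_LLAMA_70B, 0.5)

print(f"input:   {input_ratio:.1f}x")
print(f"output:  {output_ratio:.1f}x")
print(f"blended: {blended_ratio:.1f}x")
```

Changing `input_share` shows how much the ratio moves with the workload: prompt-heavy workloads sit closer to the input ratio, generation-heavy ones closer to the output ratio.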

These gaps reflect a structural reality: LPUs produce more tokens per watt, which mechanically reduces operating costs.

Vera Rubin promises another 10x reduction

Nvidia is targeting $45/M tokens with Vera Rubin + LPX in production for trillion-parameter models, roughly 10x cheaper than current Blackwell pricing for those models.

For 70B-parameter models, projections of 10x lower cost versus Blackwell suggest a range of $0.10 to $0.20/M tokens, competitive with the best prices on the market today.

The AI inference market was valued at $106 billion in 2025: projections for 2030 reach $255 billion, a CAGR of 19.2%.
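
The growth rate follows from the two endpoint values with the standard compound annual growth rate formula. A minimal check, assuming five compounding years between 2025 and 2030; the `cagr` helper is defined here for illustration.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1 / years) - 1


# Market size in billions of dollars, as quoted above.
growth = cagr(106, 255, 2030 - 2025)
print(f"CAGR: {growth:.1%}")
```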

Concrete calculation: your AI agent today vs. tomorrow

Take a real case: a company handling 100,000 customer support tickets per month via a GPT-4o-based AI agent.

Each ticket averages 2,000 input tokens and 500 output tokens: that’s 200 million input tokens and 50 million output tokens, or 250 million tokens per month.

At current GPT-4o pricing ($2.50/M input + $10/M output), the monthly bill comes to $1,000: $500 for input, $500 for output.

On GroqCloud with Llama 3.3 70B, the same volume drops to about $158 per month ($118 for input, $39.50 for output): a saving of about 84%.

If 70B-class pricing on Vera Rubin reaches the projected $0.10 to $0.20/M tokens, that bill could fall to roughly $25 to $50 per month for the same volume.
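
The bill in this example can be reproduced from the per-ticket token counts and the list prices quoted in this article. A minimal sketch; the `monthly_cost` helper is hypothetical, and prices are expressed in dollars per million tokens.

```python
TICKETS_PER_MONTH = 100_000
INPUT_TOKENS_PER_TICKET = 2_000
OUTPUT_TOKENS_PER_TICKET = 500


def monthly_cost(input_price, output_price):
    """Monthly bill in dollars, given prices in $ per million tokens."""
    input_millions = TICKETS_PER_MONTH * INPUT_TOKENS_PER_TICKET / 1_000_000
    output_millions = TICKETS_PER_MONTH * OUTPUT_TOKENS_PER_TICKET / 1_000_000
    return input_millions * input_price + output_millions * output_price


gpt4o_bill = monthly_cost(2.50, 10.00)   # GPT-4o list prices
groq_bill = monthly_cost(0.59, 0.79)     # Llama 3.3 70B on GroqCloud
saving = 1 - groq_bill / gpt4o_bill

print(f"GPT-4o:    ${gpt4o_bill:,.2f}")
print(f"GroqCloud: ${groq_bill:,.2f}")
print(f"Saving:    {saving:.0%}")
```

Swapping in your own ticket volume, token counts, or a projected price pair gives a first-order estimate for other scenarios. It ignores caching discounts, batch pricing, and retries.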

At that cost level, use cases that weren’t profitable to run at scale become viable: proactive follow-up agents, systematic analysis of every ticket, automated summaries for each interaction.

Cheap inference doesn’t shrink budgets: it multiplies the use cases that existing budgets can fund.

The competitors’ response

The Groq acquisition accelerates a trend the hyperscalers had already anticipated: reducing their dependence on Nvidia GPUs by building their own chips.

AMD is betting on GPU architecture with its MI350 series (35x inference improvement vs. previous generation) and is preparing the MI400 in 2026 on a 2nm process with 432 GB of HBM4.

Google continues evolving its TPUs with Ironwood (7th generation), capable of synchronizing up to 9,216 units via optical switching, though access remains limited to Google Cloud.

Microsoft is integrating its Maia 200 accelerator (TSMC 3nm, 10 PFLOPS FP4, 216 GB HBM3e) alongside Blackwell GPUs in Azure, an openly multi-vendor strategy.

Amazon is pushing forward with Trainium3 and Inferentia, already used internally to reduce costs for AWS Bedrock.

The common challenge: none of these chips benefit from the CUDA ecosystem, which remains Nvidia’s most difficult competitive advantage to replicate.

For more on Nvidia’s announcements from this period, our analysis of the GTC 2026 conference and the Feynman and OpenClaw agents lays out the full strategic context.

Antitrust and sovereignty: the real questions

In February 2026, Senators Elizabeth Warren and Ron Wyden sent a formal letter to Jensen Huang, questioning the antitrust structure of the transaction.

Their argument: a company controlling 85 to 90% of AI training accelerators that acquires the most promising inference technology on the market through a legal fiction of a license constitutes an effective consolidation of the AI value chain.

The parallel with the $40B ARM acquisition attempt in 2020 is explicit in their letter: had the transaction succeeded, Nvidia would have controlled the base architecture used by all its competitors.

The European Commission opened a preliminary review of Nvidia’s datacenter partnerships in November 2025, before the Groq announcement even came.

The question of digital sovereignty becomes concrete: if tomorrow’s global AI inference runs through LPU infrastructure controlled by Nvidia, French and European companies will depend on an American commercial decision to maintain access to their own AI agents.

Mistral, which has just reinforced its sovereignty positioning, embodies exactly this alternative: a European inference chain cannot operate at scale without access to compute infrastructure that doesn’t require going through a single American gatekeeper.

Knowing how to read chip performance in published benchmarks allows you to separate marketing claims from real gains: our guide to reading AI benchmarks without getting misled gives you the tools to evaluate Nvidia and Groq’s numbers in context.

What this means for you

The Nvidia-Groq deal redraws the economics of generative AI over the next 24 to 36 months.

Inference costs will keep falling structurally: players who built their business model on high margins tied to token costs will face growing pressure.

Players who have invested in AI agent workflows will see their operating costs drop, which makes automations profitable that weren’t viable at $2.50/M tokens.

The constraint that will slow the acceleration is no longer price: it will be data quality, orchestration reliability, and governance of automated decisions.

If you’re looking to quantify the savings available on your own inference workloads, contact us to audit your costs and identify possible migrations to the new architectures.

FAQ

Why did Nvidia pay $20B when Groq was valued at $6.9B just 90 days earlier?

The 2.9x premium reflects the strategic value of eliminating a technology competitor before it could capture the inference market: Nvidia chose to pay a high price quickly rather than let Groq take that market.

What is an LPU and how is it different from a GPU?

An LPU (Language Processing Unit) is designed exclusively for sequential inference: the compiler schedules every operation in advance, eliminating the dynamic wait times of GPUs and enabling much faster, more predictable token generation.

Is the Groq 3 LPX commercially available today?

Commercial availability of the Groq 3 LPX integrated into Vera Rubin is still to be confirmed: the announced performance figures (35x tokens/s/MW) are projections for datacenter deployments that Nvidia is preparing for 2026, with a gradual rollout.

What is the heterogeneous Vera Rubin platform?

Vera Rubin is Nvidia’s next-generation inference platform combining Rubin GPUs for the prefill (prompt processing) and Groq 3 LPUs for the decode (token generation), with each phase handled by the hardware best suited for it.

What does inference on GroqCloud actually cost?

Llama 3.3 70B on GroqCloud costs $0.59/M input tokens and $0.79/M output tokens, making it roughly 4 times cheaper on input and nearly 13 times cheaper on output than GPT-4o for a similar level of performance on standard tasks.

Did Warren and Wyden block the deal?

No: their February 2026 letter is a political challenge with no direct power to block the deal.

The FTC and DOJ are conducting separate investigations, but the non-exclusive license structure reduces the legal surface area for a traditional antitrust challenge.

Is Cerebras a better alternative to Groq?

Cerebras has 6 times more SRAM than Groq’s LPU, which gives it a theoretical edge on very large models, but its wafer-scale architecture remains harder to manufacture and scale in a datacenter than Groq’s modular approach.

Should French companies be concerned about the Nvidia-Groq consolidation?

The growing concentration of AI inference infrastructure within a single American platform raises a real dependency question: a credible sovereign alternative requires both open models (Mistral, Llama) and access to compute infrastructure not controlled by any single player.

Can AMD or the hyperscalers’ in-house chips counter Nvidia after Groq?

AMD is making progress with the MI400 in 2026, and the hyperscalers are investing in Trainium, Maia, and TPUs, but none yet match Nvidia’s CUDA software ecosystem or the GPU/LPU combination that Vera Rubin brings to interactive inference.

Will inference costs drop by 10x?

Nvidia’s 10x reduction projections over Blackwell are credible for very large models and large-scale deployments, but the actual reduction will depend on the model used, the prefill/decode ratio of your workloads, and the real commercial availability timeline for the Groq 3 LPX.
