What Is Apple Intelligence?

Apple Intelligence is Apple’s personal AI system built directly into iPhone, iPad, and Mac. Launched with iOS 18.1 in October 2024, it runs on Apple Foundation Models—language models optimized for on-device processing with Apple Silicon chips, using Private Cloud Compute only when more complex tasks require it.

Apple’s approach stands out for its uncompromising focus on privacy: AI processing happens locally on your device whenever possible, without sending personal data to external servers. When cloud processing is needed, data is encrypted and never stored by Apple.

Key Apple Intelligence Features in 2026

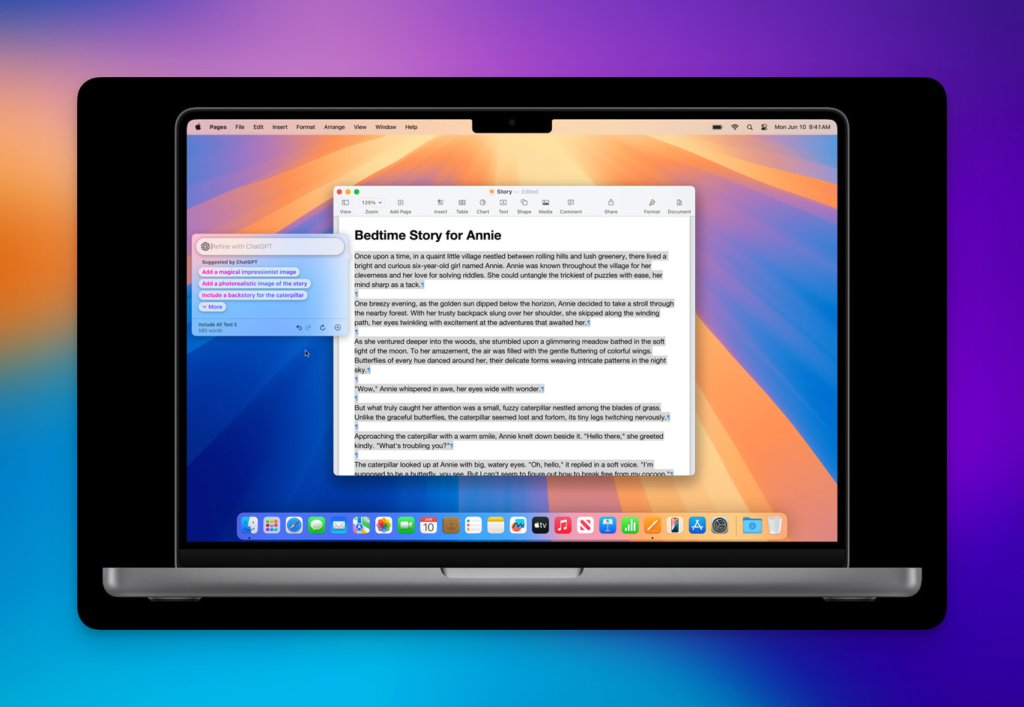

Writing Tools

Writing Tools are available everywhere you type across iOS, iPadOS, and macOS, including third-party apps. They can proofread text, rewrite it in different tones (professional, concise, friendly), and summarize long content with a single tap. Since iOS 18.2, a “Describe Your Change” option lets you give natural-language instructions for how the text should be rewritten.

Next-Generation Siri

Siri has been fundamentally redesigned with Apple Intelligence. The assistant now understands natural language far more fluidly, maintains context across multiple requests, and handles hesitations and rephrasing gracefully. You can speak or type to Siri, and it can access ChatGPT from OpenAI for questions requiring broader knowledge—with user confirmation before sharing any data.

For 2026, Apple is preparing a major overhaul of Siri’s architecture, internally dubbed “LLM Siri.” Based on a partnership with Google using Gemini models, this new version is expected to roll out during 2026, with reports pointing to iOS 26.4 (Apple jumped from iOS 18 to iOS 26 in 2025, so there is no iOS 19). The goal is a truly conversational Siri capable of executing complex multi-app actions.

Image Generation and Genmoji

Image Playground creates playful images directly in Messages, Freeform, or through a dedicated app, with Animation, Illustration, and Sketch styles. Image Wand transforms rough sketches into polished visuals or generates illustrations from surrounding context. Genmoji lets you create custom emoji by simply describing what you want—a concept unique to Apple that works anywhere emoji are supported.

Visual Intelligence

On iPhone 16 and later, Visual Intelligence uses the camera to analyze your surroundings in real time. Point your camera at a restaurant to see reviews, at text to translate it instantly, or at a product to find where to buy it. The system can also copy detected text, identify phone numbers, and search for similar items on the web.

Priority Notifications and Summaries

Apple Intelligence analyzes your notifications and surfaces the most important ones at the top. Long emails and messages are automatically summarized so you can see the essentials at a glance. Smart Replies suggest contextual responses in Mail and Messages, which cuts the time spent on everyday correspondence.

Compatibility and Availability

Apple Intelligence requires recent Apple Silicon: iPhone 15 Pro or later, iPad with M1 chip or later, or Mac with M1 chip or later. The system has been available in numerous languages since late 2025, including French, German, Spanish, Japanese, Korean, Chinese, and Portuguese.

For developers, Apple introduced the Foundation Models framework at WWDC 2025, providing free access to Apple Intelligence’s on-device language models (approximately 3 billion parameters) with no internet dependency—a significant advantage for apps requiring local AI processing.

Apple Intelligence vs. the Competition

Compared to Google Gemini (integrated into Android and soon into Siri itself) and OpenAI’s ChatGPT, Apple Intelligence takes a different approach. While Google and OpenAI rely on powerful cloud models, Apple prioritizes on-device processing and privacy. The trade-off is that some features are less advanced than their competitors’ equivalents, particularly for long-form content generation and complex reasoning.

However, the deep integration across Apple’s ecosystem—understanding your contacts, calendar, emails, photos, and habits without any of that data leaving your device—offers a unique contextual advantage. This privacy-respecting personalization is Apple Intelligence’s core value proposition in 2026.

Looking Ahead: 2026 and Beyond

The Apple-Google partnership to integrate Gemini into Siri marks a strategic turning point. Apple acknowledges it needs more powerful language models to compete with next-generation conversational AI assistants. An upcoming iOS 26 update should bring a Siri that understands on-screen context, chains complex cross-app actions, and truly functions as a personal AI agent.

Apple Intelligence continues to evolve rapidly, with regular updates expanding AI capabilities across the ecosystem. For Apple users, it’s another reason to stay in the ecosystem—and for others, a compelling reason to consider joining it.
