You’re watching a video: a politician announces his resignation, a CEO urgently requests a wire transfer from his team, a celebrity endorses a dubious financial scheme.

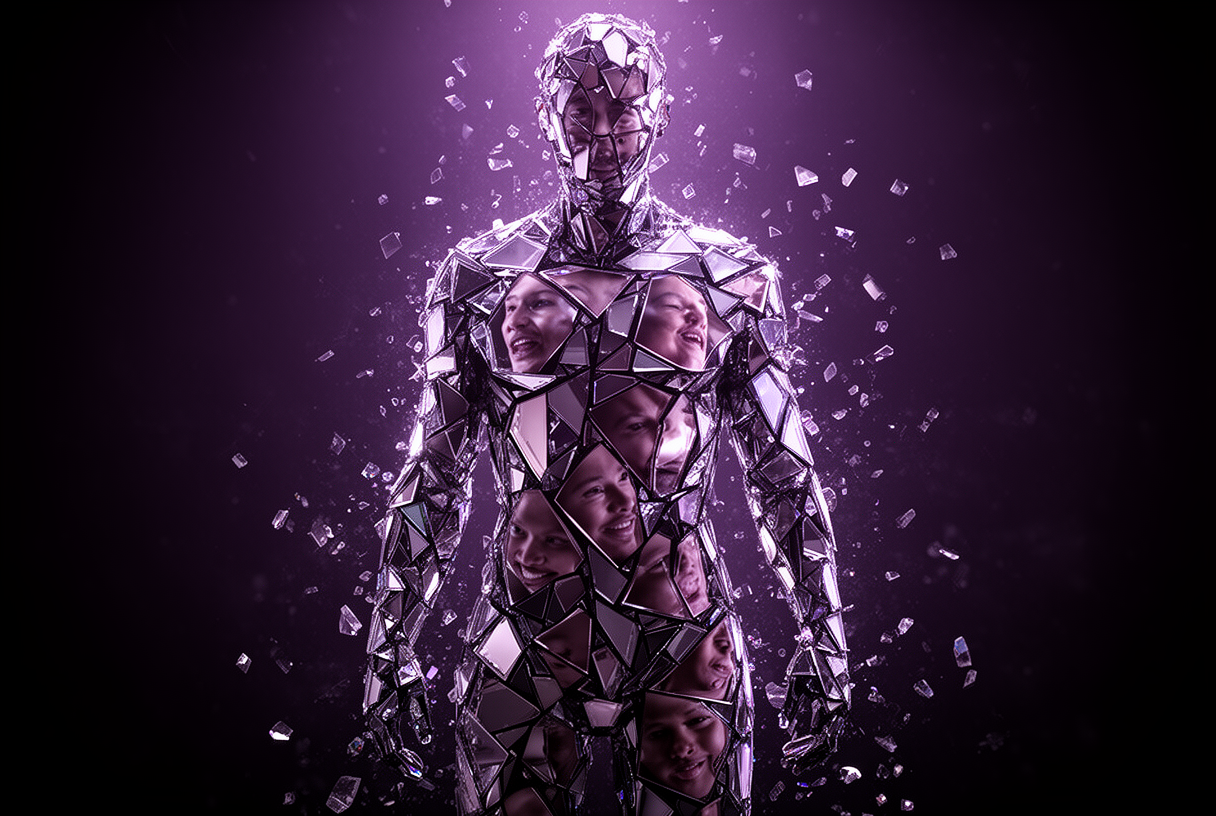

What you see doesn’t exist: the face and voice are entirely generated by AI, and your brain is completely convinced.

Deepfakes fuel multi-million dollar fraud, sway elections and destroy reputations: this guide gives you the keys to understand the threat, spot the visual cues, and protect yourself effectively.

Key takeaways:

- Humans detect deepfakes with only 52 to 68% accuracy, barely above chance

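- Detection tools such as Reality Defender, Sensity AI and Intel FakeCatcher score between 70 and 98% depending on the tool and on whether the figure comes from production or the lab, and all remain imperfect against adversarial deepfakes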

- A CFO fraud carried out via video deepfake cost a Hong Kong company $25 million in 2024

- The European AI Act requires transparency on synthetic content starting August 2026

- Hands, teeth and facial contours remain the most reliable visual cues without a specialized tool

- The best defense: verify the source before analyzing the content

How deepfakes work in 2026

Deepfake technology has historically relied on generative adversarial networks (GANs): two neural networks locked in a continuous loop, one creating synthetic content, the other trying to detect it.
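For readers who want the formal version, this loop is usually written as the minimax objective from the original GAN formulation. Here $G$ is the generator, $D$ the detector (discriminator), $x$ a real sample and $z$ random noise fed to the generator:

```latex
\min_G \max_D \;
\mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
+ \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
```

The detector pushes this value up by classifying correctly, while the generator pushes it down by producing samples the detector accepts as real.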

In 2026, GANs have largely given way to diffusion models, an approach that builds content through progressive refinement and generates 4K video at 50 frames per second with native audio sync, in seconds on a consumer PC.

The technical barrier has nearly vanished: apps like DeepFaceLab offer interfaces as simple as a social media filter, with no coding skills required.

For voice, platforms like Fish Audio or Resemble AI clone a vocal tone from just 10 to 30 seconds of recording, compared to a minimum of 60 seconds two years ago.

The three steps behind a deepfake

Creation follows a structured three-step process: data collection (photos, videos and audio recordings of the target, pulled from social networks), model training (the AI learns to reproduce the face, voice and expressions), then rendering (overlaying the model onto new footage or generating the content from scratch).

Tools like OmniHuman illustrate the state of the art: converting any photo into an animated video sequence with fully synthetic body and facial movements, with an unsettling level of coherence.

A convincing deepfake now takes less time to create than a fraudulent email takes to write: the threat no longer lies in technical sophistication, it lies in the fact that anyone can do it.

Detection in 2026: the real performance picture

The figures published by detection tool vendors are often flattering: real-world performance tells a very different story.

According to independent evaluations from 2025, a model showing 96% accuracy in the lab can drop to 63% against real adversarial deepfakes, much closer to a coin flip than to the advertised figure.

For audio deepfakes, the gap is even more severe: some models drop from perfect lab accuracy to 43% against real adversarial audio content, worse than a coin flip.

Humans detect deepfakes with 52 to 68% accuracy depending on the media type, and a 2025 study found that only 0.1% of participants tested against mixed content are genuinely reliable.
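To get a feel for what "barely above chance" means, here is a minimal sketch that computes how often pure guessing would do at least as well as a given score. The 100-clip test size and the function name are assumptions chosen for illustration, not figures from the studies cited above.

```python
from math import comb

def p_value_above_chance(correct: int, trials: int) -> float:
    """One-sided binomial p-value: probability of getting at least
    `correct` answers right out of `trials` by pure guessing (p = 0.5)."""
    tail = sum(comb(trials, k) for k in range(correct, trials + 1))
    return tail / 2 ** trials

# Hypothetical viewer who labels 100 clips as real or fake.
for correct in (52, 68):
    print(correct, "correct ->", round(p_value_above_chance(correct, 100), 4))
```

On a test of that size, a score of 52 is what a guesser reaches more than a third of the time, while 68 is very unlikely to come from guessing alone.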

Benchmark of the five major detection tools

Reality Defender is one of the few multimodal solutions: it simultaneously analyzes visual artifacts, acoustic patterns, metadata and audio-video inconsistencies, with 70 to 85% accuracy in production and confidence scores by analyzed zone.

Sensity AI focuses on forensics with 98% claimed accuracy on public datasets and reports adapted for judicial proceedings, but its analysis time makes it unsuitable for real-time detection.

Microsoft Video Authenticator analyzes each frame of a video and returns a probability score for manipulation per facial zone, with each suspect zone visually flagged for verification teams.
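As a rough illustration of how per-frame, per-zone scores can be turned into something a verification team can act on, the sketch below averages scores by zone and flags the zones above a threshold. The data layout, the zone names and the 0.7 threshold are all hypothetical; this is not Microsoft's API or output format.

```python
from collections import defaultdict
from statistics import mean

def summarize_zones(frames, threshold=0.7):
    """frames: list of {zone_name: manipulation_probability} dicts, one per frame.
    Returns (mean score per zone, sorted list of zones at or above threshold)."""
    by_zone = defaultdict(list)
    for frame in frames:
        for zone, prob in frame.items():
            by_zone[zone].append(prob)
    zone_means = {zone: mean(probs) for zone, probs in by_zone.items()}
    flagged = sorted(z for z, m in zone_means.items() if m >= threshold)
    return zone_means, flagged

# Made-up scores for three frames of a suspect clip.
frames = [
    {"mouth": 0.91, "eyes": 0.40, "hairline": 0.75},
    {"mouth": 0.88, "eyes": 0.35, "hairline": 0.80},
    {"mouth": 0.95, "eyes": 0.42, "hairline": 0.60},
]
zone_means, flagged = summarize_zones(frames)
print(flagged)
```

Averaging over frames smooths out single-frame noise, at the cost of diluting a manipulation that only appears briefly.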

Intel FakeCatcher takes a radically different approach: it detects variations in blood flow within facial pixels, a biological signal that’s difficult to fake, achieving 96% accuracy in the lab.
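The general idea of reading a pulse from skin pixels (often called remote photoplethysmography) can be sketched with a toy example: take the average green value of a face region frame by frame, then look for a dominant frequency in the plausible heart-rate band. This is an illustration under strong assumptions (a clean synthetic signal, a hypothetical function name, a naive frequency scan) and not Intel's implementation.

```python
import math

def dominant_frequency_hz(signal, fps, lo=0.7, hi=4.0, step=0.05):
    """Scan candidate frequencies in a plausible heart-rate band
    (0.7 to 4 Hz, i.e. 42 to 240 bpm) and return the one with the most energy."""
    avg = sum(signal) / len(signal)
    centered = [s - avg for s in signal]
    best_freq, best_power = lo, -1.0
    steps = int(round((hi - lo) / step))
    for i in range(steps + 1):
        freq = lo + i * step
        re = sum(v * math.cos(2 * math.pi * freq * n / fps) for n, v in enumerate(centered))
        im = sum(v * math.sin(2 * math.pi * freq * n / fps) for n, v in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_freq, best_power = freq, power
    return best_freq

# Synthetic stand-in for the mean green-channel value of a face region:
# a 1.2 Hz pulse (72 bpm) sampled for 10 seconds at 30 frames per second.
fps = 30
signal = [100 + 0.5 * math.sin(2 * math.pi * 1.2 * n / fps) for n in range(300)]
print(round(dominant_frequency_hz(signal, fps) * 60), "bpm")
```

A real face yields a noisy but coherent rhythm across regions; a signal with no clear peak in this band, or inconsistent peaks between cheeks and forehead, is the kind of anomaly such an approach looks for.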

Pindrop Pulse, specialized in audio, reaches 99% accuracy by combining voice detection with its global authentication system, trained on more than 20 million samples covering 370 different speech synthesis systems.

The most recent adversarial systems bypass detectors in 78 to 99% of cases when specifically designed to do so: the race between generation and detection is not being won by the defenders.

Documented real-world cases

Deepfakes have moved beyond the theoretical stage: their impact spans large-scale financial fraud, organized political manipulation and targeted harassment.

CFO fraud: $25 million lost in Hong Kong

In February 2024, an employee at a Hong Kong-based multinational authorized a $25 million wire transfer after joining a deepfake video call involving several executives simultaneously, all entirely synthetic.

This case marks a major qualitative leap: attackers are no longer content with a single deepfake participant. They now build entire meetings of synthetic people.

Back in 2019, a British company had already lost €220,000 after a deepfake voice call impersonating the CEO with enough fidelity to convince the subsidiary director to execute the transfer.

Elections and organized disinformation

During the 2024 New Hampshire Democratic primary, voters received deepfake robocalls impersonating President Biden and explicitly asking them not to vote.

In Ireland, during the 2025 presidential election, a deepfake video of the winning candidate announcing a withdrawal from the race circulated 48 hours before the vote, accompanied by purported confirmations from national journalists who were themselves deepfaked.

AI-generated political content spreads 90% faster on social media than human-created content, according to analyses of the 2024 US election cycle.

The 2026 French municipal campaigns illustrate an additional tactic: accusing a genuine compromising video of being a deepfake, without even needing to produce the fake.

How to spot a deepfake without a specialized tool

Several areas of the body remain persistent weak points for image and video generators in 2026.

Hands and fingers are the most reliable cues: fused digits, more than five fingers, anatomically impossible curvature, or an abnormal skin texture at the joints.

Teeth often form a uniform, smooth white block, lacking the natural variation in color, spacing and irregularity of real human teeth.

Facial contours still give away many deepfakes: unusual blurring at the edge between skin and hair, distortion during profile rotations, and ears that disappear or deform at 90 degrees.

Eyes and blinking remain a reliable marker: humans blink every 2 to 10 seconds with subtle muscular contractions around the eyes, while deepfakes blink mechanically or not at all.
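If you can note when blinks occur in a clip, the rule of thumb above can be turned into a simple check. The sketch below is a heuristic only: the 2 to 10 second window comes from the paragraph above, while the regularity threshold (`min_cv`) and the function name are assumptions, and a flag is a hint to look closer, not a verdict.

```python
from statistics import mean, pstdev

def blink_flags(blink_times_s, min_gap=2.0, max_gap=10.0, min_cv=0.1):
    """blink_times_s: sorted timestamps (in seconds) of observed blinks.
    Returns a list of warnings; an empty list means nothing unusual."""
    if len(blink_times_s) < 2:
        return ["fewer than two blinks detected"]
    gaps = [b - a for a, b in zip(blink_times_s, blink_times_s[1:])]
    flags = []
    if any(g > max_gap for g in gaps):
        flags.append("gap longer than expected between blinks")
    if any(g < min_gap for g in gaps):
        flags.append("blinks closer together than expected")
    # Coefficient of variation near zero means metronome-like blinking.
    if pstdev(gaps) / mean(gaps) < min_cv:
        flags.append("blink rhythm is suspiciously regular")
    return flags

print(blink_flags([0, 4, 8, 12, 16]))            # perfectly even spacing
print(blink_flags([1.0, 4.5, 7.2, 13.0, 16.1]))  # irregular, human-like spacing
```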

In video, micro-expressions are costly to simulate: a deepfake face often looks slightly rigid, with facial emotions fractionally out of sync with the voice.

For audio: a cloned voice often lacks natural emotional variation, breaths fall at the wrong syntactic moment, and the sound environment may be inconsistent with the visual setting.

Reverse image search via Google Images, TinEye or Yandex lets you verify whether a supposedly fresh image is actually months old, immediately undermining the claimed context.

Effective protection strategies

The most effective rule for individuals: never make an urgent decision based solely on a video or voice call, regardless of how polished the image or sound appears.

If a manager or someone close to you requests a sensitive action (a wire transfer, access rights, a quick decision), calling back on a number verified independently is the reflex to build.

Solutions like World ID represent one of the emerging responses for proving that a contact is a real human being, in a context where synthetic identities are becoming indistinguishable.

For companies, mandatory multi-channel verification is the most effective measure: any request involving a financial transaction or sensitive access must be confirmed via an independent secondary channel.
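The rule can be encoded directly in an approval workflow. The following is a minimal sketch, with hypothetical action and channel names, of a check that refuses a sensitive request until it has been confirmed on a channel other than the one it arrived on.

```python
from dataclasses import dataclass, field

# Hypothetical list of actions that require independent confirmation.
SENSITIVE_ACTIONS = {"wire_transfer", "access_grant"}

@dataclass
class Request:
    action: str
    received_via: str                                # channel the request arrived on
    confirmed_via: set = field(default_factory=set)  # channels where it was confirmed

def may_execute(req: Request) -> bool:
    """Sensitive requests need at least one confirmation on a channel
    different from the one the request arrived on."""
    if req.action not in SENSITIVE_ACTIONS:
        return True
    independent = req.confirmed_via - {req.received_via}
    return len(independent) >= 1

req = Request("wire_transfer", received_via="video_call")
print(may_execute(req))                       # no confirmation yet
req.confirmed_via.add("callback_known_number")
print(may_execute(req))                       # confirmed on a second channel
```

A confirmation on the same video call does not count, which is the point: a compromised channel cannot vouch for itself.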

Pre-agreed code words between trusted parties are a simple and remarkably effective measure: a word or phrase shared exclusively between them, impossible to reproduce without prior compromise.
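Code words are normally checked by ear, but if a team wants an internal tool to verify them (for a help desk, say), the word itself should not be stored in clear text. Here is a minimal sketch using Python's standard library; the function names and the iteration count are assumptions.

```python
import hashlib
import hmac
import os

ITERATIONS = 100_000  # assumed work factor for the key-derivation function

def enroll(code_word: str):
    """Store only a salted hash of the code word, never the word itself."""
    salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", code_word.encode(), salt, ITERATIONS)
    return salt, digest

def verify(attempt: str, salt: bytes, digest: bytes) -> bool:
    """Recompute the hash and compare in constant time."""
    candidate = hashlib.pbkdf2_hmac("sha256", attempt.encode(), salt, ITERATIONS)
    return hmac.compare_digest(candidate, digest)

salt, digest = enroll("blue heron at noon")
print(verify("blue heron at noon", salt, digest))
print(verify("blue heron", salt, digest))
```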

Limiting the public exposure of your face, voice and photos on social networks reduces the training data available to create a deepfake: a priority precaution for children’s photos.

The best defense against deepfakes is not technological: it’s the deliberate introduction of friction before any urgent action based on video or audio content.

Regulation in 2026: what’s actually changing

The European AI Act, applicable from 2 August 2026, imposes explicit transparency obligations: Article 50 requires AI system outputs to be marked in machine-readable format and users to be informed when content is artificially generated.
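To make "machine-readable" concrete, here is a sketch of a JSON label that declares a file as AI-generated and ties that declaration to the exact bytes of the file. The field names and functions are purely illustrative: they are not taken from the AI Act or from any official standard.

```python
import hashlib
import json

def make_label(content: bytes, generator: str) -> str:
    """Build a JSON sidecar label bound to the content by its SHA-256 hash.
    Field names are illustrative, not an official schema."""
    return json.dumps({
        "ai_generated": True,
        "generator": generator,
        "sha256": hashlib.sha256(content).hexdigest(),
    }, sort_keys=True)

def label_matches(content: bytes, label_json: str) -> bool:
    """True if the label declares AI generation and its hash matches the content."""
    label = json.loads(label_json)
    return (label.get("ai_generated") is True
            and label.get("sha256") == hashlib.sha256(content).hexdigest())

video_bytes = b"placeholder for the bytes of a generated video"
label = make_label(video_bytes, generator="example-model")
print(label_matches(video_bytes, label))
```

A sidecar label like this is easy to strip, which is why real-world schemes tend to rely on embedded, signed metadata or watermarks.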

This regulation only covers deepfakes that are otherwise lawful: illegal content (non-consensual pornography, defamation, fraud) falls under other existing legal frameworks.

In France, the CNIL has clarified that deepfake practices already constitute criminal offenses under existing law, with ongoing proceedings against platforms generating non-consensual content through their AI models.

In Italy, a law from September 2025 criminalizes the distribution of deepfakes without consent, with sentences of 1 to 5 years imprisonment.

In the UK, creating intimate deepfakes without consent has been a criminal offense since February 2026, with a detection tool evaluation framework co-developed with Microsoft.

In the US, the TAKE IT DOWN Act signed in May 2025 requires platforms to remove non-consensual deepfakes within 48 hours of notification, with an obligation to identify and remove duplicates.

When to be genuinely concerned

Deepfakes warrant serious vigilance in four specific contexts: urgent financial requests received via video or call, shocking political content released just before a vote, unsolicited intimate communications, and any content pushing for an immediate reaction with no verifiable source.

Overhype exists: most viral deepfakes are quickly identified by fact-checking teams, and the most obvious fakes remain detectable by the naked eye without specialized tools.

The real danger is often the “liar’s dividend”: the ability to invoke the existence of deepfakes to deny a genuine compromising video, with growing credibility among the public.

Research from the University of Würzburg shows that politicians who deny a genuine scandalous video by labeling it a deepfake are perceived as stronger than those who apologize, even when the video is authentic.

The best immunity remains a culture of systematic verification: question the source before the content, wait for confirmation from several trusted outlets, and resist the pressure of urgency.

Conclusion

Deepfakes occupy an in-between space that our information culture has not yet fully absorbed: a serious threat in specific contexts, overestimated in others.

Detection technology is advancing, but it remains in a permanent race with generation technology, with the gap rarely favoring the defenders.

What works today: the verification reflex, deliberate friction before any urgent action, and the awareness that our perceptual instincts are no longer reliable arbiters.

You see a shocking video: ask yourself who wants you to believe this, and why now.

Have you ever spotted a deepfake in your news feed?

Share your experience in the comments.

FAQ: deepfakes in 2026

Can you detect a deepfake with the naked eye?

Yes, but with limited reliability: humans succeed in 52 to 68% of cases according to studies, and only 0.1% of people tested are reliable against mixed content.

Hands, teeth and facial contours remain the most revealing areas.

Are detection tools reliable in 2026?

In the lab, the best tools show 94 to 98% accuracy.

In real conditions against adversarial deepfakes, this figure drops to 63 to 85% depending on the tool: no solution is foolproof against content specifically designed to bypass detection.

What are the best detection tools available?

Reality Defender (multimodal, 70 to 85% in production), Sensity AI (forensic, 98% in the lab), Intel FakeCatcher (blood flow analysis, 96% in the lab) and Pindrop Pulse (specialized audio, 99%) are among the best-performing solutions depending on the use case.

Are audio deepfakes hard to detect?

Audio deepfakes are among the most easily detected by automated tools (84 to 89% in production for the best tools).

Humans, though, detect them less reliably than video.

Specialized tools like Pindrop remain highly effective in this specific area.

How do I protect my company from deepfake fraud?

Establish a multi-channel verification protocol: any sensitive request received by video or call must be confirmed through an independent secondary channel.

Pre-agreed code words between trusted parties are a simple and highly effective measure for finance teams.

Is creating a deepfake illegal in France?

Creation on its own remains something of a legal grey area.

Distributing deepfakes for defamation, non-consensual pornography or fraud is criminally punishable under existing law, and the European AI Act adds transparency obligations from August 2026.

Can deepfakes influence an election?

Yes: AI-generated content spreads 90% faster than human-created content on social media, and a Brookings study estimates that deepfakes could reduce voter turnout by up to 7% in tight races, a margin that exceeds most close election results.

Are children exposed to deepfakes?

Yes: intimate deepfakes targeting minors have more than doubled in 18 months according to the 2025 report by the Australian eSafety Commissioner.

Nudify apps accessible on smartphones require no technical skills, which makes limiting the public exposure of children’s photos a priority.

Can you prove a video is a deepfake in court?

It’s possible with forensic analyses produced by tools like Sensity AI, but the proof remains complex to establish.

French and European courts are progressively recognizing these reports as evidence, and case law is still being built in most countries.

What is the “liar’s dividend” in the context of deepfakes?

It’s the strategy of invoking the existence of deepfakes to deny a genuine compromising video: as the technology spreads and public distrust grows, bad-faith actors can challenge authentic evidence with growing credibility among the general public.
